Server environment:

Shared storage: 192.168.68.100 (NFS, MinIO)

Primary cluster: 192.168.68.101

Standby cluster: 192.168.68.102

Deploy NFS

# Server side: 192.168.68.100

yum -y install nfs-utils

systemctl enable rpcbind.service

systemctl enable nfs-server.service

systemctl start rpcbind.service

systemctl start nfs-server

mkdir -p /data/nfsdata

vi /etc/exports

/data/nfsdata 192.168.68.0/24(rw,sync,no_root_squash)

exportfs -r # re-export after editing /etc/exports, because nfs-server is already running

# Client side: 192.168.68.101, 192.168.68.102

yum -y install nfs-utils

showmount -e 192.168.68.100

mkdir -p /data/nfsdata

mount -t nfs 192.168.68.100:/data/nfsdata /data/nfsdata

chown -R tidb:tidb /data/nfsdata

# Add to /etc/fstab so the mount persists across reboots

192.168.68.100:/data/nfsdata /data/nfsdata nfs defaults 0 0
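Because the client steps are repeated on both cluster hosts, it is easy to append the same /etc/fstab entry twice. The following is a minimal sketch of an idempotent append. The function name ensure_line is hypothetical and not part of the original steps; it takes the file as an argument so it can be tried on a scratch file first.

```shell
#!/bin/bash
# ensure_line FILE LINE: append LINE to FILE only if an identical line is not already present.
ensure_line() {
  local file="$1" line="$2"
  # -F fixed string, -x whole-line match, -q quiet; a missing file counts as "not present"
  grep -Fxq -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Example (as root on 192.168.68.101 and 192.168.68.102):
# ensure_line /etc/fstab "192.168.68.100:/data/nfsdata /data/nfsdata nfs defaults 0 0"
# mount -a   # mounts every /etc/fstab entry that is not mounted yet
```

Running the function a second time with the same arguments leaves the file unchanged.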

Deploy MinIO

# Server download

https://dl.minio.org.cn/server/minio/release/linux-amd64/

# Client download

https://dl.minio.org.cn/client/mc/release/linux-amd64/

# Official site: https://www.minio.org.cn/

wget -O /usr/local/bin/minio https://dl.minio.org.cn/server/minio/release/linux-amd64/minio # server

wget -O /usr/local/bin/mc https://dl.minio.org.cn/client/mc/release/linux-amd64/mc # client

chmod 755 /usr/local/bin/{minio,mc}

[root@localhost ~]# minio --version

[root@localhost ~]# mc --version

Create the data and log directories, and add a user:

mkdir -p /data/minio/{data,logs,backup,redo}

useradd minio && echo minio | passwd --stdin minio # optional: create an unprivileged user to run the process

chown -R minio:minio /data/minio/

Create the startup script

su - minio

vim /data/minio/minio.sh

#!/bin/bash

export MINIO_ROOT_USER=admin
export MINIO_ROOT_PASSWORD=Admin@123

MINIO_DATA_DIR=/data/minio/data
MINIO_LOG_DIR=/data/minio/logs
MINIO_ADDR=0.0.0.0   # listen address
MINIO_API=9000       # API port: handles data requests such as uploads and downloads
MINIO_UI=9001        # console port: serves the web management UI

case "$1" in
  start)
    nohup minio server ${MINIO_DATA_DIR} \
      --console-address ${MINIO_ADDR}:${MINIO_UI} \
      --address ${MINIO_ADDR}:${MINIO_API} >> ${MINIO_LOG_DIR}/minio-$(date +%Y%m%d).log 2>&1 &
    ;;
  stop)
    # pgrep -f matches the full command line, so this selects the server process
    # rather than any process whose arguments merely contain "minio/"
    pid=$(pgrep -f "minio server")
    # SIGTERM lets MinIO shut down cleanly; ${pid} stays unquoted so several PIDs become separate arguments
    if [ -n "${pid}" ]; then kill ${pid}; else echo "MinIO not running..."; fi
    ;;
  *)
    echo "Usage: $0 {start|stop}"
    exit 1
    ;;
esac
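The script starts MinIO in the background and returns immediately, so later steps cannot tell whether the server is actually up. The sketch below polls MinIO's liveness endpoint (/minio/health/live on the API port) until it answers. The function names and the 30-try default are assumptions chosen for illustration.

```shell
#!/bin/bash
# minio_health_url HOST PORT: print the liveness URL of the MinIO API endpoint.
minio_health_url() {
  printf 'http://%s:%s/minio/health/live\n' "$1" "$2"
}

# wait_for_minio HOST PORT [TRIES]: poll once per second until the endpoint returns success.
wait_for_minio() {
  local url tries="${3:-30}" i
  url=$(minio_health_url "$1" "$2")
  for ((i = 0; i < tries; i++)); do
    # -f: treat HTTP errors as failure, -s: silent
    curl -fs -o /dev/null "$url" && return 0
    sleep 1
  done
  echo "MinIO did not become healthy at $url" >&2
  return 1
}

# Example: bash minio.sh start && wait_for_minio 192.168.68.100 9000
```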

启动服务

bash minio.sh start

访问页面:

http://192.168.68.100:9001/login (admin/Admin@123)

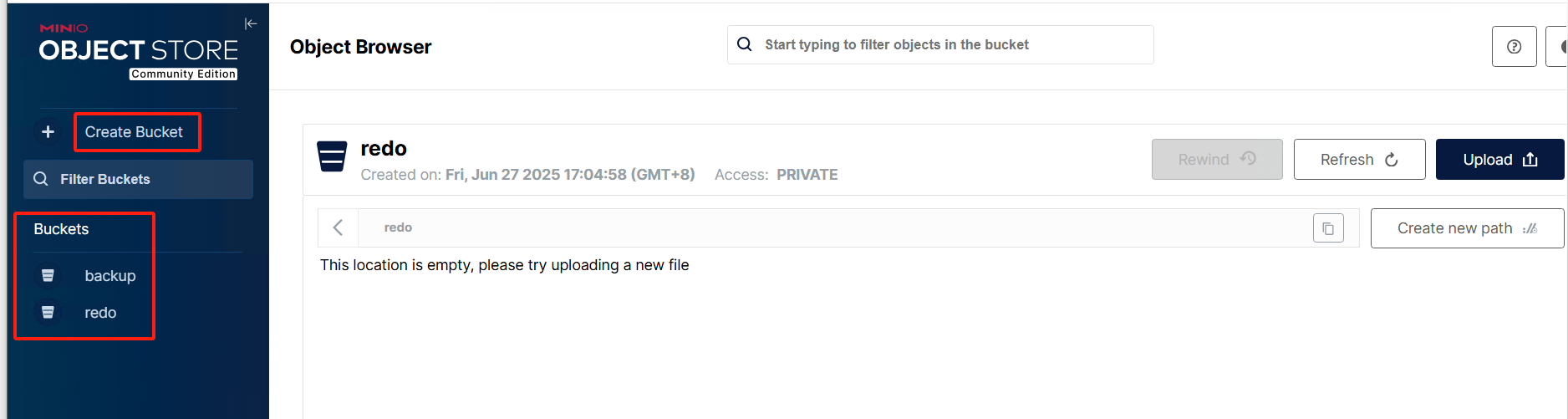

创建bucket:

在管理页面点击:Create Bucket 之后,输入名称创建:backup、redo

Example: TiDB backup and restore with MinIO

# Backup

mysql> BACKUP DATABASE * TO 's3://backup/202507071609?access-key=admin&secret-access-key=Admin@123&endpoint=http://192.168.68.100:9000&force-path-style=true';

mysql> show backups;

# Restore

mysql> RESTORE DATABASE * FROM 's3://backup/202507071609?access-key=admin&secret-access-key=Admin@123&endpoint=http://192.168.68.100:9000&force-path-style=true';

mysql> show restores;
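The storage URI above carries the credentials and the endpoint as query parameters, so characters such as @, : and / in those values are worth percent-encoding when the URI is assembled by a script. The following is a minimal sketch under that assumption. The helper names urlencode and s3_uri are hypothetical, and whether your TiDB version also accepts the unencoded form should be checked against its documentation.

```shell
#!/bin/bash
# urlencode STRING: percent-encode every character outside the RFC 3986 unreserved set.
# ASCII input is assumed.
urlencode() {
  local LC_ALL=C s="$1" out="" c i
  for ((i = 0; i < ${#s}; i++)); do
    c="${s:i:1}"
    case "$c" in
      [a-zA-Z0-9.~_-]) out+="$c" ;;
      *) out+=$(printf '%%%02X' "'$c") ;;   # "'c" makes printf use the character code
    esac
  done
  printf '%s\n' "$out"
}

# s3_uri BUCKET PREFIX ACCESS_KEY SECRET_KEY ENDPOINT: print the storage URI.
s3_uri() {
  printf 's3://%s/%s?access-key=%s&secret-access-key=%s&endpoint=%s&force-path-style=true\n' \
    "$1" "$2" "$(urlencode "$3")" "$(urlencode "$4")" "$(urlencode "$5")"
}

# Example:
# s3_uri backup 202507071609 admin 'Admin@123' http://192.168.68.100:9000
```

The printed URI can be pasted into the BACKUP or RESTORE statement between the single quotes.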

# Connect to MinIO

mc alias set myminio http://192.168.68.100:9000 admin Admin@123

mc ls myminio

mc admin info myminio

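# mc mb myminio/mybucket02 # create a bucket

# mc ls myminio # list buckets

# mc rb myminio/mybucket02 # remove a bucket that contains no objects

# mc rb myminio/mybucket02 --force # force-remove a bucket even if it contains files

# Recursively delete a directory

mc rm myminio/tidbbackup/bak_20210922/ --recursive --force

# Recursively force-delete files older than 8 days; the age accepts days, hours, minutes, and so on

mc rm myminio/tidbbackup/fullbackup/ --recursive --force --older-than 8d

# Back up from the operating system command line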

export AWS_ACCESS_KEY_ID=admin

export AWS_SECRET_ACCESS_KEY=Admin@123

tiup br backup full --ratelimit 60 --pd "192.168.68.101:2379" --storage "s3://backup/202507071635" --send-credentials-to-tikv=true --s3.endpoint "http://192.168.68.100:9000" --log-file ./backup.log

Backup and restore

Back up table db23.t1 with a rate limit of 60 MiB/s

export AWS_ACCESS_KEY_ID=myminioid

export AWS_SECRET_ACCESS_KEY=myminioPassWord

/data/br_513/br backup table --db db23 --table t1 --ratelimit 60 --pd "192.168.1.25:2379" --storage "s3://tidbbackup/bak_20220105" --send-credentials-to-tikv=true --s3.endpoint "http://192.168.1.32:9000" --log-file /tmp/backup.log

Restore table db23.t1 with a rate limit of 60 MiB/s

export AWS_ACCESS_KEY_ID=myminioid

export AWS_SECRET_ACCESS_KEY=myminioPassWord

/data/br_513/br restore table --db db23 --table t1 --ratelimit 60 --pd "192.168.2.25:2379" --storage "s3://tidbbackup/bak_20220105" --send-credentials-to-tikv=true --s3.endpoint "http://192.168.1.32:9000" --log-file restore.log

Deploy the primary and standby TiDB clusters

Deploy an independent TiDB cluster on each of 101 and 102 (pd, tidb, tikv, tiflash, ticdc, monitor, grafana, alertmanager).

topology.yaml (192.168.68.101:tidb851a)

tiup cluster deploy tidb851a v8.5.1 topology.yaml --user tidb -p

# # Global variables are applied to all deployments and used as the default value of
# # the deployments if a specific deployment value is missing.
global:
  user: "tidb"
  ssh_port: 22
  deploy_dir: "/opt/tidb-deploy"
  data_dir: "/data/tidb-data"

# # Monitored variables are applied to all the machines.
monitored:
  node_exporter_port: 9100
  blackbox_exporter_port: 9115
  deploy_dir: "/opt/tidb-deploy/monitored-9100"
  data_dir: "/data/tidb-data/monitored-9100"
  log_dir: "/opt/tidb-deploy/monitored-9100/log"

server_configs:
  tidb:
    log.slow-threshold: 300
  tikv:
    readpool.storage.use-unified-pool: false
    readpool.coprocessor.use-unified-pool: true
  pd:
    replication.enable-placement-rules: true
    replication.location-labels: ["zone","idc","host"]
    replication.isolation-level: "host"
  tiflash:
    logger.level: "info"

pd_servers:
  - host: 192.168.68.101
    name: "pd1"
    client_port: 2379
    peer_port: 2380
    deploy_dir: "/opt/tidb-deploy/pd-2379"
    data_dir: "/data/tidb-data/pd-2379"
    log_dir: "/opt/tidb-deploy/pd-2379/log"

tidb_servers:
  - host: 192.168.68.101
    port: 4000
    status_port: 10080
    deploy_dir: "/opt/tidb-deploy/tidb-4000"
    log_dir: "/opt/tidb-deploy/tidb-4000/log"

tikv_servers:
  - host: 192.168.68.101
    port: 20161
    status_port: 20181
    deploy_dir: "/opt/tidb-deploy/tikv-20161"
    data_dir: "/data1/tidb-data/tikv-20161"
    log_dir: "/opt/tidb-deploy/tikv-20161/log"
    config:
      server.labels: { zone: "zone1", idc: "idc1", host: "host1" }
  - host: 192.168.68.101
    port: 20162
    status_port: 20182
    deploy_dir: "/opt/tidb-deploy/tikv-20162"
    data_dir: "/data2/tidb-data/tikv-20162"
    log_dir: "/opt/tidb-deploy/tikv-20162/log"
    config:
      server.labels: { zone: "zone1", idc: "idc1", host: "host2" }
  - host: 192.168.68.101
    port: 20163
    status_port: 20183
    deploy_dir: "/opt/tidb-deploy/tikv-20163"
    data_dir: "/data3/tidb-data/tikv-20163"
    log_dir: "/opt/tidb-deploy/tikv-20163/log"
    config:
      server.labels: { zone: "zone1", idc: "idc1", host: "host3" }

tiflash_servers:
  - host: 192.168.68.101
    tcp_port: 9000
    flash_service_port: 3930
    flash_proxy_port: 20170
    flash_proxy_status_port: 20292
    metrics_port: 8234
    deploy_dir: "/opt/tidb-deploy/tiflash-9000"
    data_dir: "/data/tidb-data/tiflash-9000"
    log_dir: "/opt/tidb-deploy/tiflash-9000/log"

monitoring_servers:
  - host: 192.168.68.101
    port: 9090
    deploy_dir: "/opt/tidb-deploy/prometheus-8249"
    data_dir: "/data/tidb-data/prometheus-8249"
    log_dir: "/opt/tidb-deploy/prometheus-8249/log"

grafana_servers:
  - host: 192.168.68.101
    port: 3000
    deploy_dir: /opt/tidb-deploy/grafana-3000

alertmanager_servers:
  - host: 192.168.68.101
    web_port: 9093
    cluster_port: 9094
    deploy_dir: "/opt/tidb-deploy/alertmanager-9093"
    data_dir: "/data/tidb-data/alertmanager-9093"
    log_dir: "/opt/tidb-deploy/alertmanager-9093/log"

cdc_servers:
  - host: 192.168.68.101
    port: 8300
    gc-ttl: 86400
    deploy_dir: "/opt/tidb-deploy/cdc-8300"
    data_dir: "/data/tidb-data/cdc-8300"
    log_dir: "/opt/tidb-deploy/cdc-8300/log"

topology.yaml (192.168.68.102:tidb851b)

tiup cluster deploy tidb851b v8.5.1 topology.yaml --user tidb -p

# # Global variables are applied to all deployments and used as the default value of
# # the deployments if a specific deployment value is missing.
global:
  user: "tidb"
  ssh_port: 22
  deploy_dir: "/opt/tidb-deploy"
  data_dir: "/data/tidb-data"

# # Monitored variables are applied to all the machines.
monitored:
  node_exporter_port: 9100
  blackbox_exporter_port: 9115
  deploy_dir: "/opt/tidb-deploy/monitored-9100"
  data_dir: "/data/tidb-data/monitored-9100"
  log_dir: "/opt/tidb-deploy/monitored-9100/log"

server_configs:
  tidb:
    log.slow-threshold: 300
  tikv:
    readpool.storage.use-unified-pool: false
    readpool.coprocessor.use-unified-pool: true
  pd:
    replication.enable-placement-rules: true
    replication.location-labels: ["zone","idc","host"]
    replication.isolation-level: "host"
  tiflash:
    logger.level: "info"

pd_servers:
  - host: 192.168.68.102
    name: "pd1"
    client_port: 2379
    peer_port: 2380
    deploy_dir: "/opt/tidb-deploy/pd-2379"
    data_dir: "/data/tidb-data/pd-2379"
    log_dir: "/opt/tidb-deploy/pd-2379/log"

tidb_servers:
  - host: 192.168.68.102
    port: 4000
    status_port: 10080
    deploy_dir: "/opt/tidb-deploy/tidb-4000"
    log_dir: "/opt/tidb-deploy/tidb-4000/log"

tikv_servers:
  - host: 192.168.68.102
    port: 20161
    status_port: 20181
    deploy_dir: "/opt/tidb-deploy/tikv-20161"
    data_dir: "/data1/tidb-data/tikv-20161"
    log_dir: "/opt/tidb-deploy/tikv-20161/log"
    config:
      server.labels: { zone: "zone2", idc: "idc1", host: "host1" }
  - host: 192.168.68.102
    port: 20162
    status_port: 20182
    deploy_dir: "/opt/tidb-deploy/tikv-20162"
    data_dir: "/data2/tidb-data/tikv-20162"
    log_dir: "/opt/tidb-deploy/tikv-20162/log"
    config:
      server.labels: { zone: "zone2", idc: "idc1", host: "host2" }
  - host: 192.168.68.102
    port: 20163
    status_port: 20183
    deploy_dir: "/opt/tidb-deploy/tikv-20163"
    data_dir: "/data3/tidb-data/tikv-20163"
    log_dir: "/opt/tidb-deploy/tikv-20163/log"
    config:
      server.labels: { zone: "zone2", idc: "idc1", host: "host3" }

tiflash_servers:
  - host: 192.168.68.102
    tcp_port: 9000
    flash_service_port: 3930
    flash_proxy_port: 20170
    flash_proxy_status_port: 20292
    metrics_port: 8234
    deploy_dir: "/opt/tidb-deploy/tiflash-9000"
    data_dir: "/data/tidb-data/tiflash-9000"
    log_dir: "/opt/tidb-deploy/tiflash-9000/log"

monitoring_servers:
  - host: 192.168.68.102
    port: 9090
    deploy_dir: "/opt/tidb-deploy/prometheus-8249"
    data_dir: "/data/tidb-data/prometheus-8249"
    log_dir: "/opt/tidb-deploy/prometheus-8249/log"

grafana_servers:
  - host: 192.168.68.102
    port: 3000
    deploy_dir: /opt/tidb-deploy/grafana-3000

alertmanager_servers:
  - host: 192.168.68.102
    web_port: 9093
    cluster_port: 9094
    deploy_dir: "/opt/tidb-deploy/alertmanager-9093"
    data_dir: "/data/tidb-data/alertmanager-9093"
    log_dir: "/opt/tidb-deploy/alertmanager-9093/log"

cdc_servers:
  - host: 192.168.68.102
    port: 8300
    gc-ttl: 86400
    deploy_dir: "/opt/tidb-deploy/cdc-8300"
    data_dir: "/data/tidb-data/cdc-8300"
    log_dir: "/opt/tidb-deploy/cdc-8300/log"
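The two topology files differ only in the host address and the zone label, so the standby file can be derived from the primary one instead of being maintained by hand. The following is a minimal sketch of that idea. The function name to_standby and the file names in the example are hypothetical.

```shell
#!/bin/bash
# to_standby: read the primary topology on stdin, write the standby variant to stdout.
to_standby() {
  sed -e 's/192\.168\.68\.101/192.168.68.102/g' \
      -e 's/zone: "zone1"/zone: "zone2"/g'
}

# Example:
# to_standby < topology-a.yaml > topology-b.yaml
# diff topology-a.yaml topology-b.yaml   # review exactly which lines changed
```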

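Migrate full data

- Disable GC. To make sure that data newly written during incremental migration is not lost, disable garbage collection (GC) on the upstream cluster before starting the backup, so that the system stops cleaning up historical data. Run the following statement to disable GC:

mysql> SET GLOBAL tidb_gc_enable=FALSE;
Query OK, 0 rows affected (0.01 sec)

Query tidb_gc_enable to confirm that GC is disabled:

mysql> SELECT @@global.tidb_gc_enable;
+-------------------------+
| @@global.tidb_gc_enable |
+-------------------------+
|                       0 |
+-------------------------+
1 row in set (0.00 sec)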

- Back up data. Run the BACKUP statement on the upstream cluster to back up the data (to NFS):

mysql> backup database * to 'local:///data/nfsdata/tidb851a-backup/';
+----------------------------------------+--------+--------------------+---------------------+---------------------+
| Destination                            | Size   | BackupTS           | Queue Time          | Execution Time      |
+----------------------------------------+--------+--------------------+---------------------+---------------------+
| local:///data/nfsdata/tidb851a-backup/ | 118560 | 459081695216795655 | 2025-06-30 12:28:07 | 2025-06-30 12:28:07 |
+----------------------------------------+--------+--------------------+---------------------+---------------------+
1 row in set (3.95 sec)

After the backup statement succeeds, TiDB returns metadata about the backup. Pay particular attention to BackupTS: data written before this timestamp is included in the backup. Later in this document, BackupTS is used both as the cutoff for data validation and as the starting point of the TiCDC incremental scan.
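BackupTS is a TSO value: the high bits hold a physical timestamp in milliseconds and the low 18 bits hold a logical counter. Decoding it is a quick way to confirm that a timestamp copied into a later step really corresponds to the backup time. The following is a minimal sketch; the function names are hypothetical, and tso_to_utc assumes GNU date.

```shell
#!/bin/bash
# tso_physical_ms TSO: physical part of the TSO, in milliseconds since the Unix epoch.
tso_physical_ms() { echo $(( $1 >> 18 )); }

# tso_logical TSO: logical counter stored in the low 18 bits.
tso_logical() { echo $(( $1 & ((1 << 18) - 1) )); }

# tso_to_utc TSO: wall-clock time in UTC (uses the GNU date "@seconds" form).
tso_to_utc() { date -u -d "@$(( ($1 >> 18) / 1000 ))" '+%Y-%m-%d %H:%M:%S'; }

# Example: tso_to_utc 459081695216795655
```

The printed time is in UTC, so compare it with the Queue Time column after adjusting for the server's time zone. TiDB also provides the TIDB_PARSE_TSO() SQL function for the same purpose.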

- Restore data. Run the RESTORE statement on the downstream cluster to restore the data:

mysql> use testdb;
Database changed

mysql> show tables;
Empty set (0.00 sec)

mysql> restore database * from 'local:///data/nfsdata/tidb851a-backup/';
+----------------------------------------+--------+--------------------+--------------------+---------------------+---------------------+
| Destination                            | Size   | BackupTS           | Cluster TS         | Queue Time          | Execution Time      |
+----------------------------------------+--------+--------------------+--------------------+---------------------+---------------------+
| local:///data/nfsdata/tidb851a-backup/ | 118560 | 459081695216795655 | 459081711796879364 | 2025-06-30 12:29:10 | 2025-06-30 12:29:10 |
+----------------------------------------+--------+--------------------+--------------------+---------------------+---------------------+
1 row in set (2.82 sec)

mysql> show tables;
+------------------+
| Tables_in_testdb |
+------------------+
| t1               |
| t2               |
| t3               |
| t4               |
+------------------+
4 rows in set (0.00 sec)

- (Optional) Validate the data. Use sync-diff-inspector to check that the upstream and downstream data are consistent at a given point in time. From the backup and restore output above, the upstream backup timestamp is 459081695216795655 and the downstream restore completed at timestamp 459081711796879364.

sync_diff_inspector -C ./config.yaml

For how to configure sync-diff-inspector, see its configuration file documentation. The configuration used in this document is:

# Diff Configuration.

######################### Global config #########################
check-thread-count = 4
export-fix-sql = true
check-struct-only = false

######################### Datasource config #########################
[data-sources]
[data-sources.upstream]
    host = "192.168.68.101" # replace with the actual upstream cluster IP
    port = 4000
    user = "root"
    password = "root"
    snapshot = "459081695216795655" # set to the actual backup timestamp (BackupTS)
[data-sources.downstream]
    host = "192.168.68.102" # replace with the actual downstream cluster IP
    port = 4000
    user = "root"
    password = "root"
    snapshot = "459081711796879364" # set to the actual restore timestamp (Cluster TS)

######################### Task config #########################
[task]
    output-dir = "./output"
    source-instances = ["upstream"]
    target-instance = "downstream"
    target-check-tables = ["*.*"]

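Migrate incremental data

- Deploy TiCDC. Once the full data migration is complete, deploy and configure a TiCDC cluster to replicate incremental data. For a production cluster, see the TiCDC deployment documentation. The test clusters in this document were created with a TiCDC node already running, so the changefeed can be configured directly.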

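- Create the replication task. Create a changefeed configuration file and save it as changefeed.toml.

[consistent]
# Consistency level; "eventual" enables consistent replication
level = "eventual"
# Redo logs are stored on the shared NFS mount through a local:// URI here; s3 and nfs are the other storage options
storage = "local:///data/nfsdata/tidb851a-redo"

On the upstream cluster (192.168.68.101), run the following command to create the replication link from the upstream cluster to the downstream cluster:

tiup cdc cli changefeed create --server=http://192.168.68.101:8300 \
    --sink-uri="mysql://root:root@192.168.68.102:4000" \
    --changefeed-id="tidb851a-to-tidb851b" \
    --start-ts="459081695216795655" \
    --config=changefeed.toml

In this command:

- --server: the address of any node in the TiCDC cluster

- --sink-uri: the downstream address of the replication task

- --start-ts: the starting point of TiCDC replication; set it to the actual backup timestamp (the BackupTS from step 2, full data migration)

For more changefeed configuration options, see the TiCDC changefeed configuration parameters documentation.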

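- Re-enable GC. TiCDC guarantees that historical data that has not yet been replicated is not garbage-collected. Therefore, once the changefeed from the upstream cluster to the downstream cluster has been created, GC can be turned back on. For details, see the complete behavior of the TiCDC GC safepoint. Run the following statement to enable GC:

mysql> SET GLOBAL tidb_gc_enable=TRUE;
Query OK, 0 rows affected (0.03 sec)

Query tidb_gc_enable to confirm that GC is enabled:

mysql> SELECT @@global.tidb_gc_enable;
+-------------------------+
| @@global.tidb_gc_enable |
+-------------------------+
|                       1 |
+-------------------------+
1 row in set (0.00 sec)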
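Since GC protection depends on the changefeed existing, it can be useful to confirm that the changefeed is running before turning GC back on. The sketch below extracts the state values from the output of the changefeed list command. It assumes the command prints pretty-printed JSON with one "state" field per line, which should be verified against the actual output of your TiCDC version; the function names are hypothetical.

```shell
#!/bin/bash
# changefeed_states: read `changefeed list` output on stdin, print one state per line.
# Assumes pretty-printed JSON, that is, at most one "state" field per input line.
changefeed_states() {
  sed -n 's/.*"state"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# all_normal: succeed only if at least one state was read and every state is "normal".
all_normal() {
  local states
  states=$(changefeed_states)
  [ -n "$states" ] && ! grep -qv '^normal$' <<< "$states"
}

# Example:
# tiup cdc cli changefeed list --server=http://192.168.68.101:8300 | all_normal \
#   && echo "changefeed is running; GC can be re-enabled"
```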

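Verify data replication

After running DML on the primary cluster, query the standby cluster to check that the change has been replicated.

# Primary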

mysql> use testdb;

mysql> insert into t4 values(2,'testcdc');

# Standby

mysql> use testdb;

mysql> select * from t4;
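Replication is asynchronous, so a query on the standby issued right after the insert may not show the new row yet. The following is a minimal sketch of polling with a bounded retry. The retry helper and row_count_is are hypothetical names, and the connection settings reuse the root/root credentials from the sink URI as an assumption.

```shell
#!/bin/bash
# retry ATTEMPTS DELAY CMD...: run CMD until it succeeds, at most ATTEMPTS times,
# sleeping DELAY seconds between attempts.
retry() {
  local attempts="$1" delay="$2" i
  shift 2
  for ((i = 1; i <= attempts; i++)); do
    "$@" && return 0
    if ((i < attempts)); then sleep "$delay"; fi
  done
  return 1
}

# row_count_is EXPECTED: succeed when testdb.t4 on the standby holds EXPECTED rows.
row_count_is() {
  local n
  n=$(mysql -h 192.168.68.102 -P 4000 -u root -proot -N -e 'SELECT COUNT(*) FROM testdb.t4')
  [ "$n" = "$1" ]
}

# Example: wait up to about 10 seconds for the standby to reach 2 rows
# retry 10 1 row_count_is 2 && echo "replicated"
```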

Changefeed operations reference:

# List changefeeds

tiup cdc cli changefeed list --server=http://192.168.68.101:8300

# Query a specific changefeed

tiup cdc cli changefeed query --server=http://192.168.68.101:8300 --changefeed-id=tidb851a-to-tidb851b

tiup cdc cli changefeed query -s --server=http://192.168.68.101:8300 --changefeed-id=tidb851a-to-tidb851b

# Pause a changefeed

tiup cdc cli changefeed pause --server=http://192.168.68.101:8300 --changefeed-id=tidb851a-to-tidb851b

# Resume a changefeed

tiup cdc cli changefeed resume --server=http://192.168.68.101:8300 --changefeed-id tidb851a-to-tidb851b

# Remove a changefeed

tiup cdc cli changefeed remove --server=http://192.168.68.101:8300 --changefeed-id tidb851a-to-tidb851b

Common MinIO commands

# Connect to MinIO

mc alias set myminio http://192.168.68.100:9000 admin Admin@123

mc admin info myminio

# Create a bucket

mc mb myminio/tidb851a20250708

# List buckets

mc ls myminio

# Remove an empty bucket: mc rb ALIAS/BUCKET

mc rb myminio/test

# Remove a bucket that still contains files: mc rb ALIAS/BUCKET --force

mc rb myminio/test --force