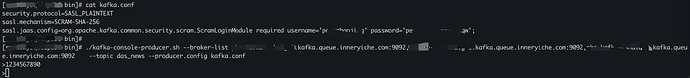

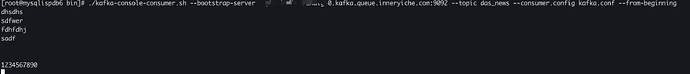

Kafka authentication settings (no certificates are used):

security.protocol=SASL_PLAINTEXT

sasl.mechanism=SCRAM-SHA-256

sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required username="user" password="password";
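If these settings are copied into a Kafka client `.properties` file, the JAAS option values need straight quotes. The curly quotes around `user` in the post were probably introduced by the forum editor, and a JAAS parser would likely not recognize them as quote characters. Below is a minimal Python sketch that renders the three settings with straight double quotes. It assumes the placeholder credentials `user`/`password`, and `jaas_quote` and `scram_client_properties` are hypothetical helpers. It also assumes values contain no `"` or `\`: the sketch rejects those characters rather than attempting JAAS and properties-file escaping.

```python
def jaas_quote(value: str) -> str:
    """Wrap a JAAS option value in straight double quotes.

    Assumption: values containing '"' or '\\' are rejected, because they
    would need escaping at both the JAAS and the .properties level.
    """
    if '"' in value or "\\" in value:
        raise ValueError("value contains a quote or backslash; escape it manually")
    return f'"{value}"'


def scram_client_properties(username: str, password: str,
                            mechanism: str = "SCRAM-SHA-256") -> str:
    """Render SASL_PLAINTEXT + SCRAM client settings as .properties text."""
    jaas = (
        "org.apache.kafka.common.security.scram.ScramLoginModule required "
        f"username={jaas_quote(username)} password={jaas_quote(password)};"
    )
    lines = [
        "security.protocol=SASL_PLAINTEXT",
        f"sasl.mechanism={mechanism}",
        f"sasl.jaas.config={jaas}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder credentials taken from the post; replace with the real account.
    print(scram_client_properties("user", "password"), end="")
```

A file produced this way can also be passed to Kafka's own command-line tools (for example `--command-config` on `kafka-acls.sh`). That lets you test the same account outside TiCDC, which reads its SASL settings from the sink URI instead.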

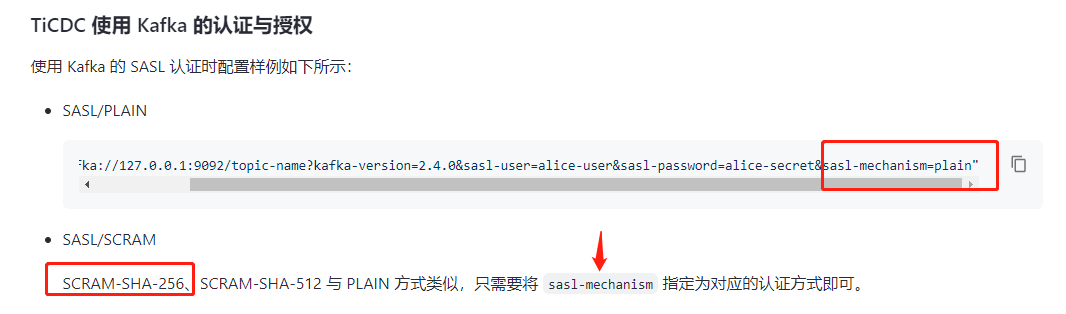

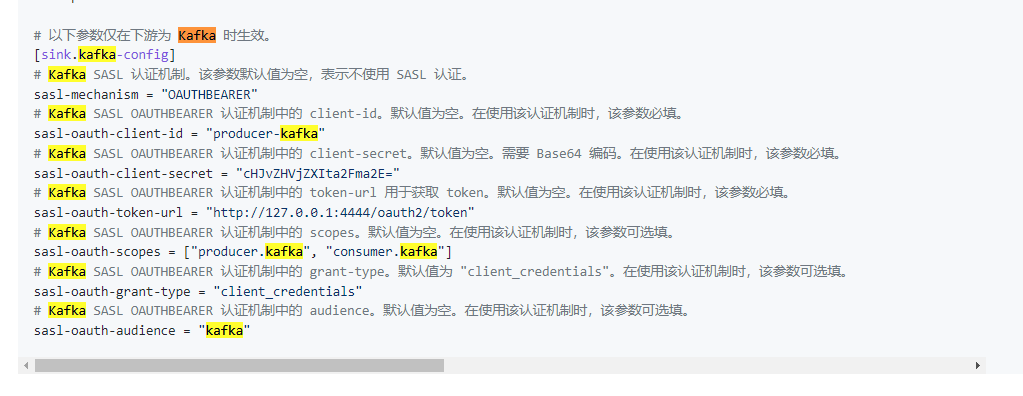

Command: tiup ctl:v6.1.3 cdc changefeed create --pd=http://10.24.7.1:2379 --sink-uri="kafka://kafka-rshaig1-0.kafka.com:9092,kafka-rshaig1-1.kafka.com:9092,kafka-rshaig1-2.kafka.com:9092/das_news?kafka-version=2.7.1&sasl-user=user&sasl-password=password&sasl-mechanism=SCRAM-SHA-256&partition-num=3&max-message-bytes=10485760&replication-factor=3&protocol=canal-json" --changefeed-id="changefeed-vv1" --config=/home/tidb/changefeed-vv1.toml
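The sink URI is a single query string inside a shell argument. Any `&`, `=`, `#` or `%` in a real password must therefore be percent-encoded, and the whole argument must use straight quotes. The curly quotes in the post are probably forum formatting, since the echoed command line shows the URI arriving intact. Below is a hedged Python sketch that assembles the same URI with `urllib.parse.urlencode` and the full command with `shlex.join`. It assumes TiCDC decodes the sink URI query with standard URL decoding. `build_kafka_sink_uri` is a hypothetical helper, and the brokers, topic and options are copied from the command above.

```python
import shlex
from urllib.parse import quote, urlencode


def build_kafka_sink_uri(brokers, topic, params):
    """Hypothetical helper: join brokers, topic and options into a sink URI.

    Keys and values are percent-encoded (safe="") so characters such as
    '&' or '=' inside a password cannot split the query string.
    """
    query = urlencode(params, quote_via=quote, safe="")
    return f"kafka://{','.join(brokers)}/{topic}?{query}"


brokers = [f"kafka-rshaig1-{i}.kafka.com:9092" for i in range(3)]
params = {
    "kafka-version": "2.7.1",
    "sasl-user": "user",          # placeholder account from the post
    "sasl-password": "password",  # placeholder password from the post
    "sasl-mechanism": "SCRAM-SHA-256",
    "partition-num": "3",
    "max-message-bytes": "10485760",
    "replication-factor": "3",
    "protocol": "canal-json",
}
uri = build_kafka_sink_uri(brokers, "das_news", params)

cmd = [
    "tiup", "ctl:v6.1.3", "cdc", "changefeed", "create",
    "--pd=http://10.24.7.1:2379",
    f"--sink-uri={uri}",
    "--changefeed-id=changefeed-vv1",
    "--config=/home/tidb/changefeed-vv1.toml",
]

if __name__ == "__main__":
    # shlex.join wraps the sink-uri argument in straight single quotes
    # because it contains shell metacharacters such as '&' and '?'.
    print(shlex.join(cmd))
```

With the placeholder credentials, the generated URI matches the one in the command character for character. The encoding only changes the output when a real password contains reserved characters.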

Output:

Starting component ctl: /home/tidb/.tiup/components/ctl/v6.1.3/ctl cdc changefeed create --pd=http://10.24.7.1:2379 --sink-uri=kafka://kafka-rshaig1-0.kafka.com:9092,kafka-rshaig1-1.kafka.com:9092,kafka-rshaig1-2.kafka.com:9092/das_news?kafka-version=2.7.1&sasl-user=user&sasl-password=password&sasl-mechanism=SCRAM-SHA-256&partition-num=3&max-message-bytes=10485760&replication-factor=3&protocol=canal-json --changefeed-id=changefeed-vv1 --config=/home/tidb/changefeed-vv1.toml

[2023/07/10 11:03:22.674 +08:00] [WARN] [sink.go:167] ["protocol is specified in both sink URI and config filethe value in sink URI will be usedprotocol in sink URI:canal-json, protocol in config file:default"]

[WARN] some tables are not eligible to replicate, []model.TableName{model.TableName{Schema:"news_v8", Table:"news_class", TableID:0, IsPartition:false}}

Could you agree to ignore those tables, and continue to replicate [Y/N]

Y

[2023/07/10 11:03:24.971 +08:00] [WARN] [sink.go:167] ["protocol is specified in both sink URI and config filethe value in sink URI will be usedprotocol in sink URI:canal-json, protocol in config file:canal-json"]

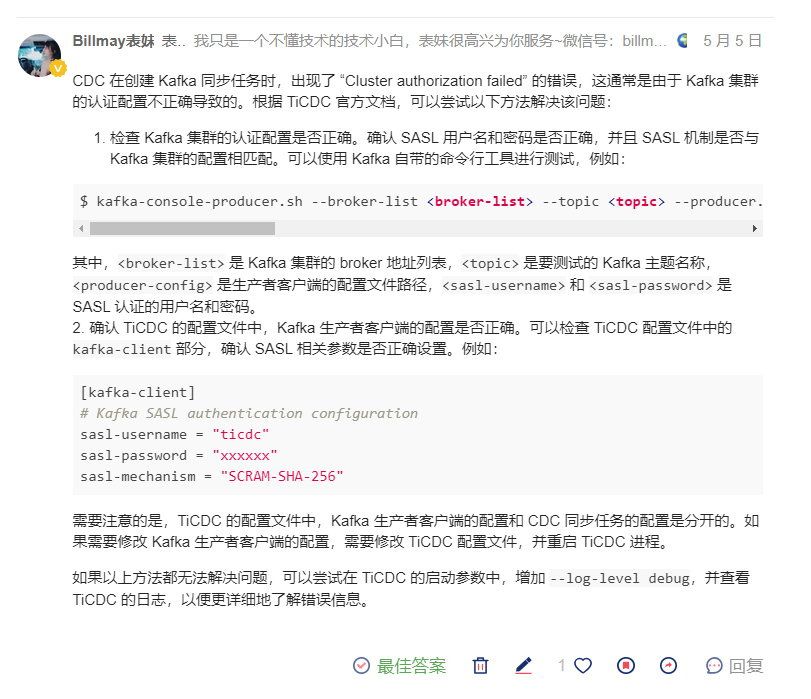

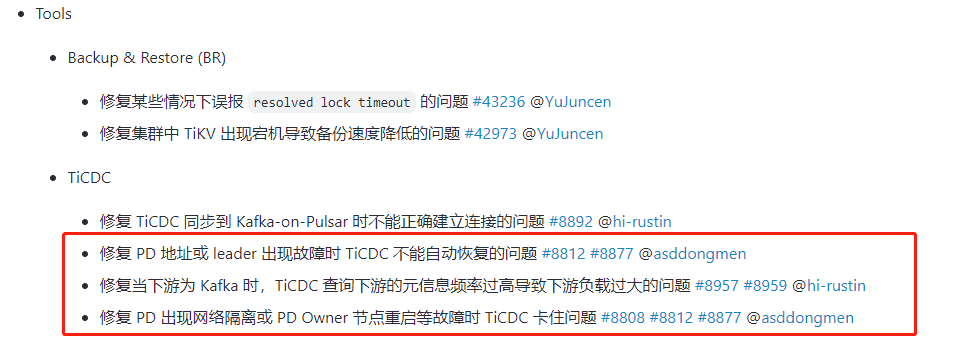

Error: [CDC:ErrKafkaNewSaramaProducer]new sarama producer: Cluster authorization failed.

Usage:

cdc cli changefeed create [flags]

Flags:

-c, --changefeed-id string Replication task (changefeed) ID

--config string Path of the configuration file

--cyclic-filter-replica-ids uints (Experimental) Cyclic replication filter replica ID of changefeed (default [])

--cyclic-replica-id uint (Experimental) Cyclic replication replica ID of changefeed

--cyclic-sync-ddl (Experimental) Cyclic replication sync DDL of changefeed (default true)

--disable-gc-check Disable GC safe point check

-h, --help help for create

--no-confirm Don't ask user whether to ignore ineligible table

--opts key=value Extra options, in the key=value format

--schema-registry string Avro Schema Registry URI

--sink-uri string sink uri

--sort-engine string sort engine used for data sort (default "unified")

--start-ts uint Start ts of changefeed

--sync-interval duration (Experimental) Set the interval for syncpoint in replication(default 10min) (default 10m0s)

--sync-point (Experimental) Set and Record syncpoint in replication(default off)

--target-ts uint Target ts of changefeed

--tz string timezone used when checking sink uri (changefeed timezone is determined by cdc server) (default "SYSTEM")

Global Flags:

--ca string CA certificate path for TLS connection

--cert string Certificate path for TLS connection

-i, --interact Run cdc cli with readline

--key string Private key path for TLS connection

--log-level string log level (etc: debug|info|warn|error) (default "warn")

--pd string PD address, use ',' to separate multiple PDs (default "http://127.0.0.1:2379")

[CDC:ErrKafkaNewSaramaProducer]new sarama producer: Cluster authorization failed.

Error: exit status 1

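1. I have already checked the domain names and the account permissions, and found no problems with either. How should I change the command?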