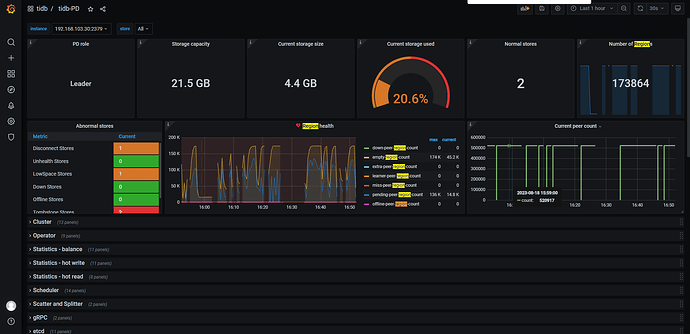

What causes these exceptions, and how can I fix the problem?

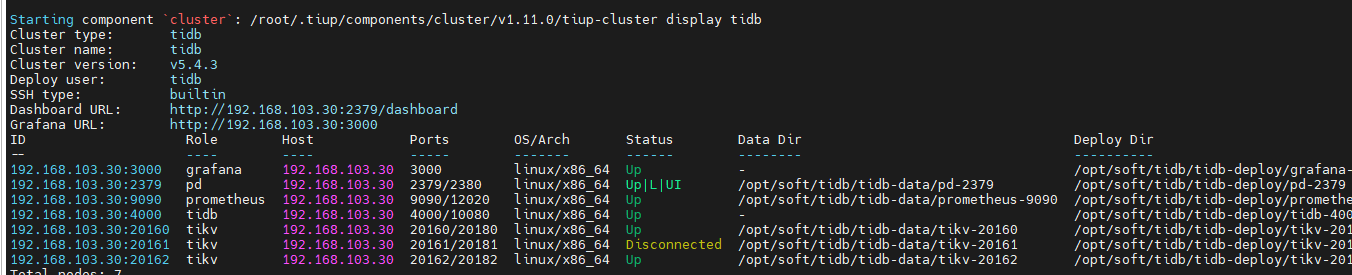

Run `tiup cluster display tidb-xxx` to check the current status of each component in the cluster.

My guess is that the OOM killer keeps killing the processes.
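One way to confirm the OOM killer guess is to scan the kernel log for kill messages. The sketch below is only an illustration: it assumes the log text (for example the output of `dmesg`) has already been read into a string, and the function name `find_oom_kills` and the sample line are made up for this example.

```python
import re
from typing import List, Tuple

# The kernel logs OOM kills with a message containing
# "Killed process <pid> (<name>)"; this pattern extracts both fields.
OOM_KILL = re.compile(r"Killed process (\d+) \(([^)]+)\)")


def find_oom_kills(kernel_log: str) -> List[Tuple[int, str]]:
    """Return (pid, process name) for every OOM kill found in the log text."""
    return [(int(pid), name) for pid, name in OOM_KILL.findall(kernel_log)]


if __name__ == "__main__":
    # Illustrative sample only; on a real host, pass in the output of `dmesg`.
    sample = (
        "[1001.5] Out of memory: Killed process 4321 (tikv-server) total-vm:9000000kB\n"
        "[1002.0] some unrelated kernel message\n"
    )
    print(find_oom_kills(sample))
```

If `tikv-server` shows up repeatedly in the result, the restarts are very likely caused by memory pressure rather than by a TiKV bug.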

https://docs.pingcap.com/zh/tidb/v5.4/hybrid-deployment-topology

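The parameters need to be adjusted for a hybrid deployment. Every parameter mentioned in that document should be recalculated and adjusted.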
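As a rough illustration of that calculation, here is a sketch of the per-instance formulas as I recall them from the linked hybrid-deployment page (block cache = total memory × 0.5 / TiKV instances, unified read pool threads = cores × 0.8 / TiKV instances, raftstore capacity = disk size / TiKV instances). Please verify them against the document. The core count and disk size below are hypothetical because the thread does not state them, and the function name `hybrid_tikv_params` is invented for this example.

```python
def hybrid_tikv_params(mem_gb, cpu_cores, disk_gb, tikv_instances):
    """Per-TiKV-instance settings for several TiKV instances on one host.

    Formulas are assumed from the hybrid-deployment documentation and
    should be checked there before use.
    """
    return {
        "storage.block-cache.capacity": f"{mem_gb * 0.5 / tikv_instances:.1f}G",
        "readpool.unified.max-thread-count": max(
            1, int(cpu_cores * 0.8 / tikv_instances)
        ),
        "raftstore.capacity": f"{disk_gb / tikv_instances:.0f}G",
    }


if __name__ == "__main__":
    # 32 GB and 3 TiKV instances come from the thread;
    # 16 cores and a 300 GB disk are hypothetical placeholders.
    for key, value in hybrid_tikv_params(32, 16, 300, 3).items():
        print(f"{key}: {value}")
```

Note that these formulas budget the machine for TiKV alone. A host that also runs TiDB, PD, Prometheus, and Grafana probably needs a smaller block cache than the formula suggests.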

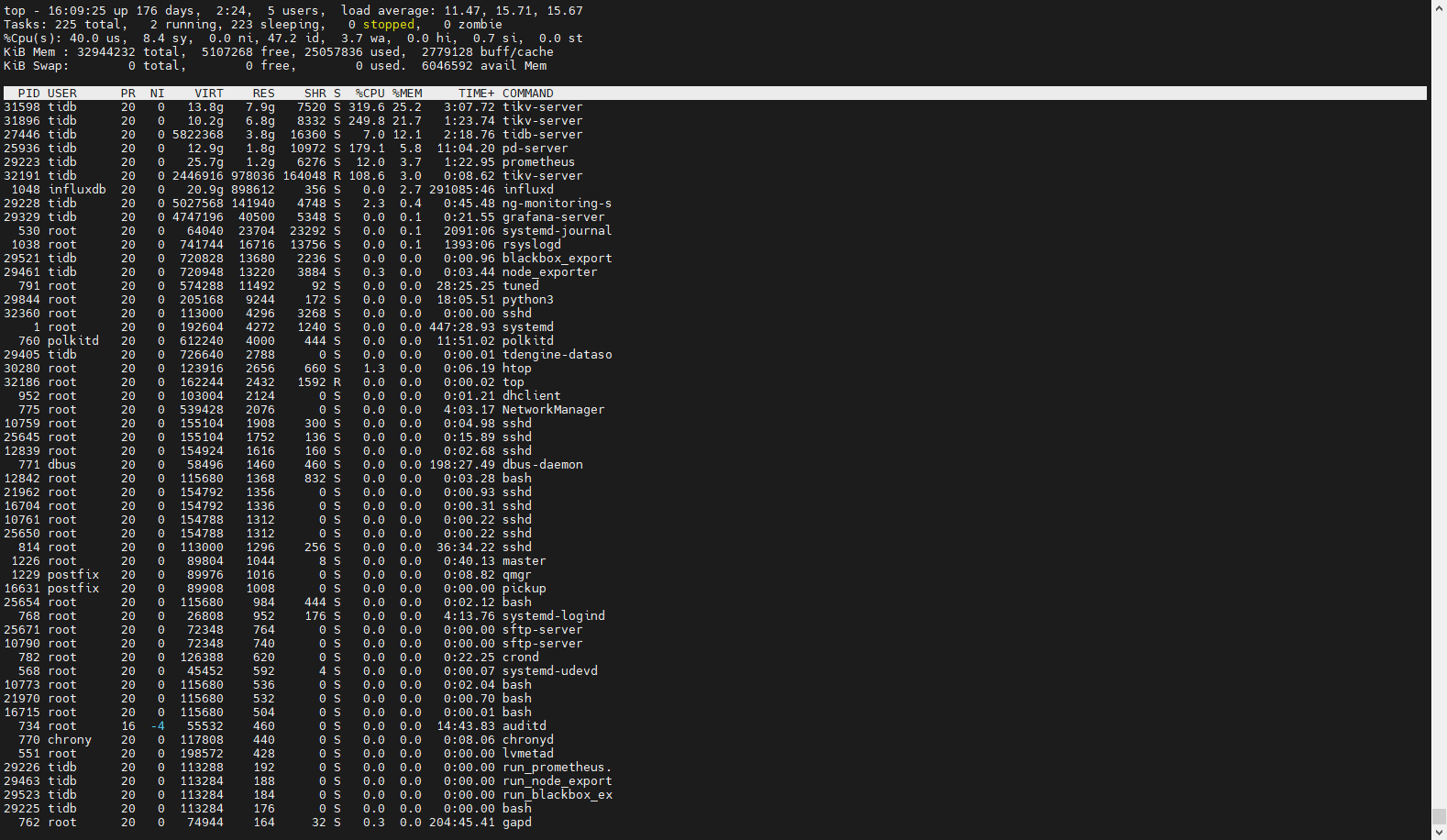

Run `top` to check memory usage, press Shift+M to sort by memory, and see which component is using a lot of memory.
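If several instances of the same component run on one machine, it can help to add up memory per process name instead of reading `top` row by row. The following is a minimal sketch that assumes the input is the text output of `ps -eo rss,comm` (resident set size in KiB plus command name). The helper name `rss_by_command` and the sample figures are hypothetical.

```python
from collections import defaultdict


def rss_by_command(ps_output: str) -> dict:
    """Sum resident memory (KiB) per command name, largest first.

    Expects the output of `ps -eo rss,comm`, including its header line.
    """
    totals = defaultdict(int)
    for line in ps_output.strip().splitlines()[1:]:  # skip the header row
        if not line.strip():
            continue
        rss, comm = line.split(None, 1)
        totals[comm.strip()] += int(rss)
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


if __name__ == "__main__":
    # Made-up numbers for illustration; not taken from the poster's machine.
    sample = (
        "  RSS COMMAND\n"
        "9000000 tikv-server\n"
        "8000000 tikv-server\n"
        " 500000 pd-server\n"
    )
    for name, kib in rss_by_command(sample).items():
        print(f"{name}: {kib / 1024 / 1024:.1f} GiB")
```

On a real host the input could come from `subprocess.run(["ps", "-eo", "rss,comm"], capture_output=True, text=True).stdout`.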

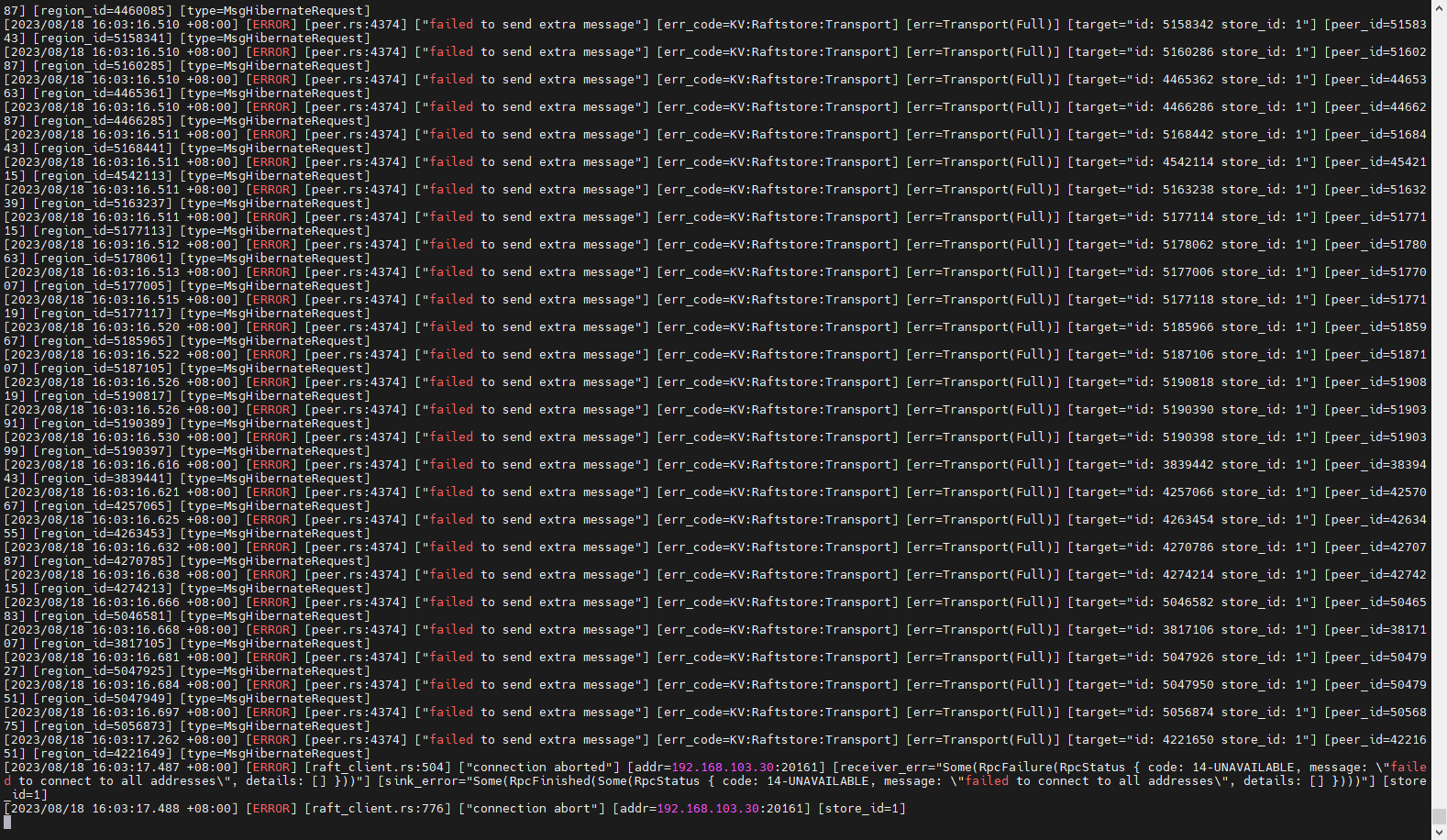

It keeps printing these errors. I set everything up according to the configuration file:

    global:
      user: tidb
      ssh_port: 22
      ssh_type: builtin
      deploy_dir: /opt/soft/tidb/tidb-deploy
      data_dir: /opt/soft/tidb/tidb-data
      os: linux
    monitored:
      node_exporter_port: 9100
      blackbox_exporter_port: 9115
      deploy_dir: /opt/soft/tidb/tidb-deploy/monitor-9100
      data_dir: /opt/soft/tidb/tidb-data/monitor-9100
      log_dir: /opt/soft/tidb/tidb-deploy/monitor-9100/log
    server_configs:
      tidb:
        log.file.max-backups: 7
        log.level: error
        log.slow-threshold: 300
      tikv:
        log.file.max-backups: 7
        log.file.max-days: 7
        log.level: error
        raftstore.capacity: 10G
        readpool.coprocessor.use-unified-pool: true
        readpool.storage.use-unified-pool: true
        storage.block-cache.capacity: 5G
        storage.block-cache.shared: true
      pd:
        log.file.max-backups: 7
        log.file.max-days: 1
        log.level: error
        replication.enable-placement-rules: true
        replication.location-labels:
        - host
      tidb_dashboard: {}
      tiflash:
        logger.level: info
      tiflash-learner: {}
      pump: {}
      drainer: {}
      cdc: {}
      kvcdc: {}
      grafana: {}
    tidb_servers:
    - host: 192.168.103.30
      ssh_port: 22
      port: 4000
      status_port: 10080
      deploy_dir: /opt/soft/tidb/tidb-deploy/tidb-4000
      log_dir: /opt/soft/tidb/tidb-deploy/tidb-4000/log
      arch: amd64
      os: linux
    tikv_servers:
    - host: 192.168.103.30
      ssh_port: 22
      port: 20160
      status_port: 20180
      deploy_dir: /opt/soft/tidb/tidb-deploy/tikv-20160
      data_dir: /opt/soft/tidb/tidb-data/tikv-20160
      log_dir: /opt/soft/tidb/tidb-deploy/tikv-20160/log
      config:
        server.labels:
          host: logic-host-1
      arch: amd64
      os: linux
    - host: 192.168.103.30
      ssh_port: 22
      port: 20161
      status_port: 20181
      deploy_dir: /opt/soft/tidb/tidb-deploy/tikv-20161
      data_dir: /opt/soft/tidb/tidb-data/tikv-20161
      log_dir: /opt/soft/tidb/tidb-deploy/tikv-20161/log
      config:
        server.labels:
          host: logic-host-1
      arch: amd64
      os: linux
    - host: 192.168.103.30
      ssh_port: 22
      port: 20162
      status_port: 20182
      deploy_dir: /opt/soft/tidb/tidb-deploy/tikv-20162
      data_dir: /opt/soft/tidb/tidb-data/tikv-20162
      log_dir: /opt/soft/tidb/tidb-deploy/tikv-20162/log
      config:
        server.labels:
          host: logic-host-1
      arch: amd64
      os: linux
    tiflash_servers: []
    pd_servers:
    - host: 192.168.103.30
      ssh_port: 22
      name: pd-192.168.103.30-2379
      client_port: 2379
      peer_port: 2380
      deploy_dir: /opt/soft/tidb/tidb-deploy/pd-2379
      data_dir: /opt/soft/tidb/tidb-data/pd-2379
      log_dir: /opt/soft/tidb/tidb-deploy/pd-2379/log
      arch: amd64
      os: linux
    monitoring_servers:
    - host: 192.168.103.30
      ssh_port: 22
      port: 9090
      ng_port: 12020
      deploy_dir: /opt/soft/tidb/tidb-deploy/prometheus-9090
      data_dir: /opt/soft/tidb/tidb-data/prometheus-9090
      log_dir: /opt/soft/tidb/tidb-deploy/prometheus-9090/log
      external_alertmanagers: []
      arch: amd64
      os: linux
    grafana_servers:
    - host: 192.168.103.30
      ssh_port: 22
      port: 3000
      deploy_dir: /opt/soft/tidb/tidb-deploy/grafana-3000
      arch: amd64
      os: linux
      username: admin
      password: admin
      anonymous_enable: false
      root_url: ""
      domain: ""

Does the machine have 32G of memory? `storage.block-cache.capacity: 5G` is still on the large side; try lowering it further.

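As a first step, set the TiKV config `storage.block-cache.capacity=3G` directly so that it stops running out of memory. With 5G, each TiKV's total memory usage can reach about 12G, and with three TiKV instances your 32G of memory is not enough, so TiKV will keep getting killed.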
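The arithmetic behind this advice can be sketched as follows. The 12G-per-TiKV figure at a 5G block cache is the estimate given in this reply; scaling it linearly to a 3G block cache is my own assumption for illustration, not a documented rule, and `estimate_tikv_total_gb` is a hypothetical helper.

```python
def estimate_tikv_total_gb(block_cache_gb, tikv_count, ratio=12 / 5):
    """Rough total memory for all TiKV instances on one host.

    `ratio` is total TiKV memory divided by block-cache size, taken from
    the thread's estimate (12G of usage at a 5G block cache). Assuming it
    stays constant at other cache sizes is a simplification.
    """
    return round(block_cache_gb * ratio * tikv_count, 1)


if __name__ == "__main__":
    host_mem_gb = 32  # from the thread
    for cache_gb in (5, 3):
        total = estimate_tikv_total_gb(cache_gb, tikv_count=3)
        verdict = "fits" if total <= host_mem_gb else "exceeds host memory"
        print(f"block cache {cache_gb}G -> about {total}G for 3 TiKVs ({verdict})")
```

Even when the TiKV total fits, the remaining memory still has to cover TiDB, PD, Prometheus, and Grafana on the same host, so a lower value may be needed in practice.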

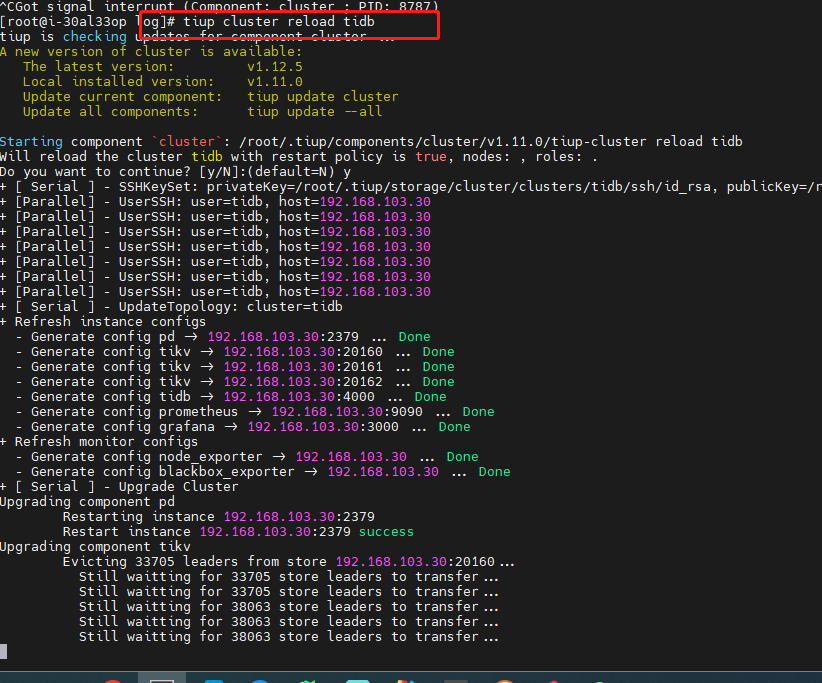

For multiple nodes co-located on a single machine, you can refer to this document to set resource limits.

https://docs.pingcap.com/zh/tidb/stable/three-nodes-hybrid-deployment#三节点混合部署的最佳实践

That won't actually hold it back~

The machine is short on resources; give it more.

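Huh? Even after configuring it according to the document, usage still can't be limited? Is proper resource isolation only available in newer versions?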

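Newer versions use soft control with the concept of a resource pool. Overall resource usage does not exceed the pool's upper limit, so it works noticeably better.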

But it is still hard to control and not easy enough to use, so all we can do is wait.
