[TiDB version] 8.5.1

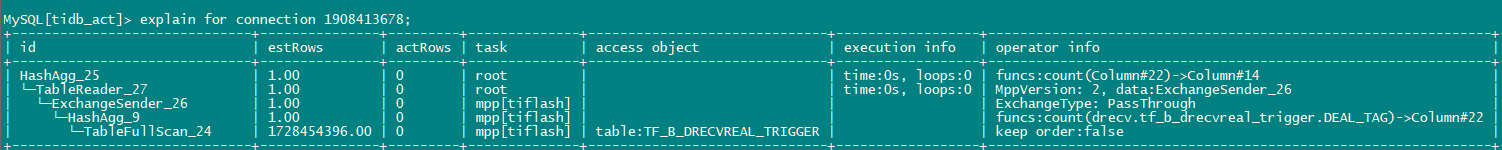

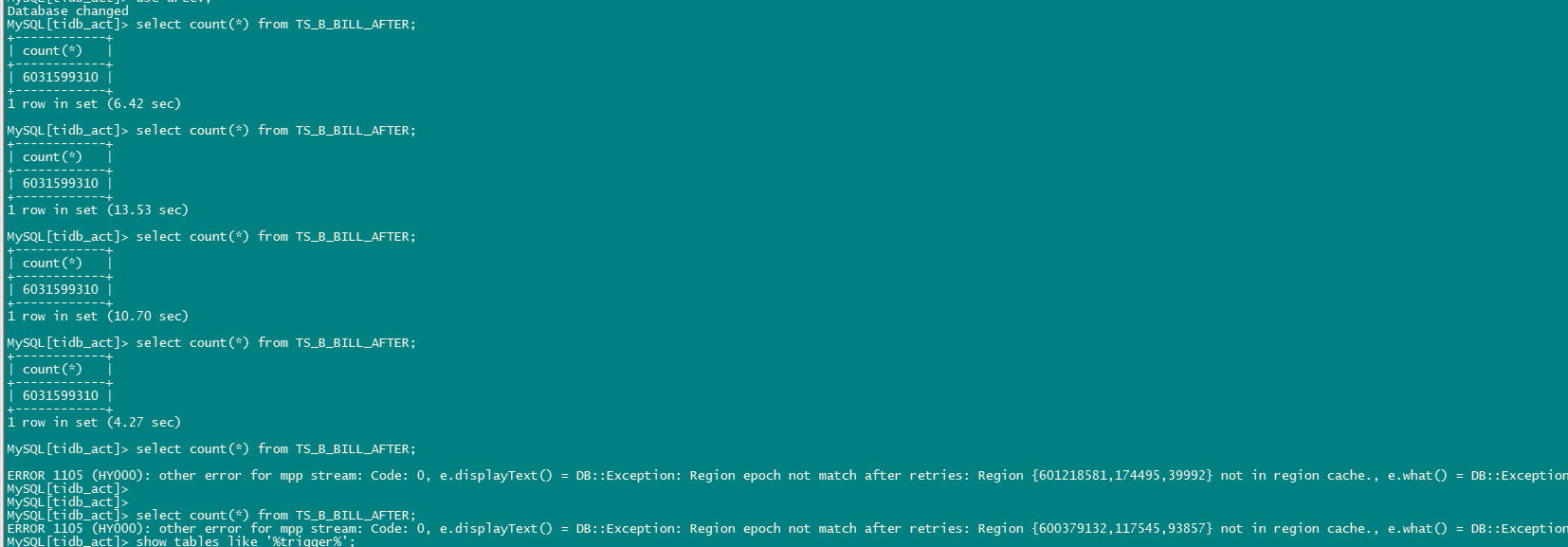

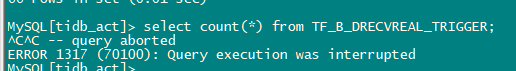

A simple count(*) query that used to return in 2-3 seconds now never finishes. The execution plan looks fine and goes through TiFlash.

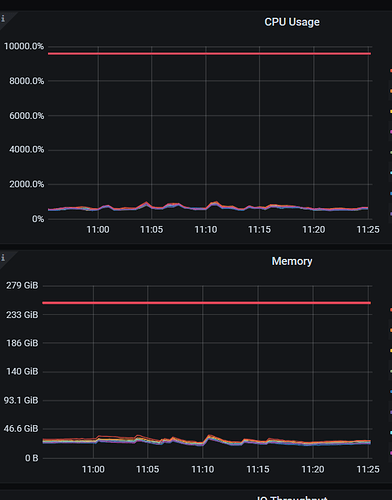

The TiFlash monitoring shows that TiFlash did not actually execute the query.

TiDB log during the SQL execution:

[2025/06/25 11:15:44.003 +08:00] [INFO] [batch_coprocessor.go:558] ["detecting available mpp stores"] [total=8] [alive=8]

[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=1] [QueryTs=1750821343998978354] [LocalQueryId=216] [ServerID=910] [address=10.172.147.109:3930] [plan="Table(tf_b_drecvreal_trigger)->HashAgg->Send(-1, )"] [mpp-version=2] [exchange-compression-mode=NONE] [GatherID=1] [resource_group=default]

[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=2] [QueryTs=1750821343998978354] [LocalQueryId=216] [ServerID=910] [address=10.172.147.108:3930] [plan="Table(tf_b_drecvreal_trigger)->HashAgg->Send(-1, )"] [mpp-version=2] [exchange-compression-mode=NONE] [GatherID=1] [resource_group=default]

[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=3] [QueryTs=1750821343998978354] [LocalQueryId=216] [ServerID=910] [address=10.172.147.104:3930] [plan="Table(tf_b_drecvreal_trigger)->HashAgg->Send(-1, )"] [mpp-version=2] [exchange-compression-mode=NONE] [GatherID=1] [resource_group=default]

[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=4] [QueryTs=1750821343998978354] [LocalQueryId=216] [ServerID=910] [address=10.172.147.105:3930] [plan="Table(tf_b_drecvreal_trigger)->HashAgg->Send(-1, )"] [mpp-version=2] [exchange-compression-mode=NONE] [GatherID=1] [resource_group=default]

[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=5] [QueryTs=1750821343998978354] [LocalQueryId=216] [ServerID=910] [address=10.172.147.106:3930] [plan="Table(tf_b_drecvreal_trigger)->HashAgg->Send(-1, )"] [mpp-version=2] [exchange-compression-mode=NONE] [GatherID=1] [resource_group=default]

[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=6] [QueryTs=1750821343998978354] [LocalQueryId=216] [ServerID=910] [address=10.172.147.112:3930] [plan="Table(tf_b_drecvreal_trigger)->HashAgg->Send(-1, )"] [mpp-version=2] [exchange-compression-mode=NONE] [GatherID=1] [resource_group=default]

[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=7] [QueryTs=1750821343998978354] [LocalQueryId=216] [ServerID=910] [address=10.172.147.110:3930] [plan="Table(tf_b_drecvreal_trigger)->HashAgg->Send(-1, )"] [mpp-version=2] [exchange-compression-mode=NONE] [GatherID=1] [resource_group=default]

[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=8] [QueryTs=1750821343998978354] [LocalQueryId=216] [ServerID=910] [address=10.172.147.111:3930] [plan="Table(tf_b_drecvreal_trigger)->HashAgg->Send(-1, )"] [mpp-version=2] [exchange-compression-mode=NONE] [GatherID=1] [resource_group=default]

[2025/06/25 11:16:42.537 +08:00] [INFO] [domain.go:3043] ["refreshServerIDTTL succeed"] [serverID=910] ["lease id"=7bcf95b146acd74d]

[2025/06/25 11:16:44.023 +08:00] [WARN] [expensivequery.go:153] [expensive_query] [cost_time=60.024901333s] [stats=TF_B_DRECVREAL_TRIGGER:458967272608497725] [conn=1908413682] [user=root] [txn_start_ts=458967310396555334] [mem_max="6360 Bytes (6.21 KB)"] [sql="SELECT COUNT(*) from drecv.tf_b_drecvreal_trigger"] [session_alias=] ["affected rows"=0]

[2025/06/25 11:17:44.122 +08:00] [WARN] [expensivequery.go:153] [expensive_query] [cost_time=120.124502499s] [stats=TF_B_DRECVREAL_TRIGGER:458967272608497725] [conn=1908413682] [user=root] [txn_start_ts=458967310396555334] [mem_max="6360 Bytes (6.21 KB)"] [sql="SELECT COUNT(*) from drecv.tf_b_drecvreal_trigger"] [session_alias=] ["affected rows"=0]

[2025/06/25 11:18:44.123 +08:00] [WARN] [expensivequery.go:153] [expensive_query] [cost_time=180.124710198s] [stats=TF_B_DRECVREAL_TRIGGER:458967272608497725] [conn=1908413682] [user=root] [txn_start_ts=458967310396555334] [mem_max="6360 Bytes (6.21 KB)"] [sql="SELECT COUNT(*) from drecv.tf_b_drecvreal_trigger"] [session_alias=] ["affected rows"=0]
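To see at a glance which TiFlash stores received MPP tasks and how long the query has been stuck, the log lines above can be summarized with a small script. This is only a rough sketch: the function name `summarize` and the abridged `SAMPLE_LOG` are made up for illustration, and it assumes `cost_time` is always logged in the `<seconds>s` form seen above. It relies on the observation that, in this log, the `timestamp` of the dispatched tasks equals the `txn_start_ts` of the `expensive_query` warnings.

```python
import re

# Abridged copies of log lines from this post (most fields removed for brevity).
SAMPLE_LOG = """\
[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=1] [address=10.172.147.109:3930]
[2025/06/25 11:15:44.007 +08:00] [INFO] [local_mpp_coordinator.go:221] ["Dispatch mpp task"] [timestamp=458967310396555334] [ID=2] [address=10.172.147.108:3930]
[2025/06/25 11:16:44.023 +08:00] [WARN] [expensivequery.go:153] [expensive_query] [cost_time=60.024901333s] [txn_start_ts=458967310396555334]
[2025/06/25 11:17:44.122 +08:00] [WARN] [expensivequery.go:153] [expensive_query] [cost_time=120.124502499s] [txn_start_ts=458967310396555334]
"""

# Matches simple [key=value] fields; quoted or hyphenated keys are skipped.
FIELD = re.compile(r'\[([A-Za-z_]+)=([^\]]*)\]')


def summarize(log_text):
    """Return (dispatched, longest), both keyed by the query start timestamp.

    dispatched: list of TiFlash addresses that were sent an MPP task.
    longest:    largest cost_time (seconds) reported by expensive_query.
    """
    dispatched = {}
    longest = {}
    for line in log_text.splitlines():
        fields = dict(FIELD.findall(line))
        if '"Dispatch mpp task"' in line:
            dispatched.setdefault(fields["timestamp"], []).append(fields["address"])
        elif "[expensive_query]" in line:
            ts = fields["txn_start_ts"]
            # Assumption: cost_time looks like "60.024901333s".
            cost = float(fields["cost_time"].rstrip("s"))
            longest[ts] = max(cost, longest.get(ts, 0.0))
    return dispatched, longest


if __name__ == "__main__":
    dispatched, longest = summarize(SAMPLE_LOG)
    for ts, addresses in dispatched.items():
        print(ts, "dispatched to", len(addresses), "stores;",
              "longest reported cost_time:", longest.get(ts), "s")
```

Run against the full tidb.log, this would show the query being dispatched to all 8 stores and still running after 180 seconds, which matches the picture of tasks being sent but never completing.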

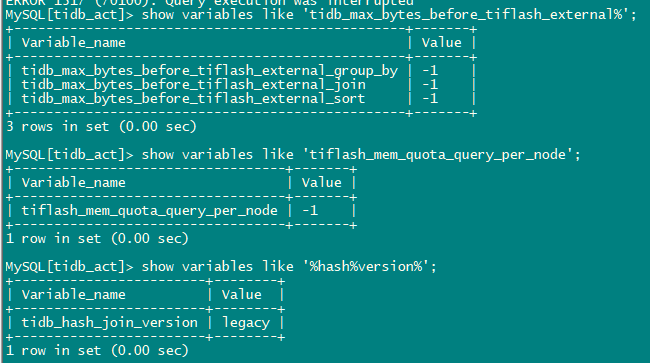

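Several memory-related parameters were adjusted yesterday, but all of them have since been reverted:

(1) Variables

(2) The memory_size parameter under resource_control, used to limit TiFlash memory usage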

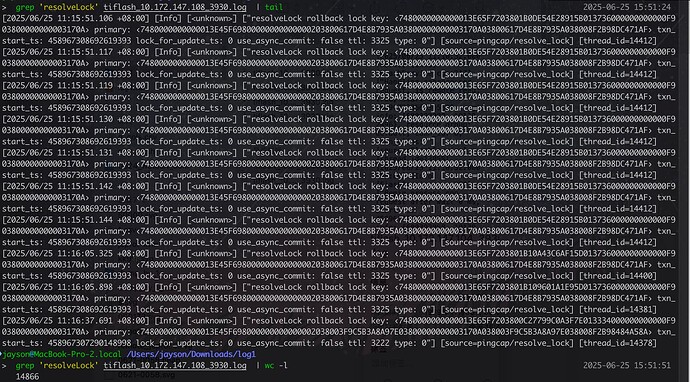

TiFlash logs:

log1.rar (56.9 MB)

log2.rar (55.7 MB)

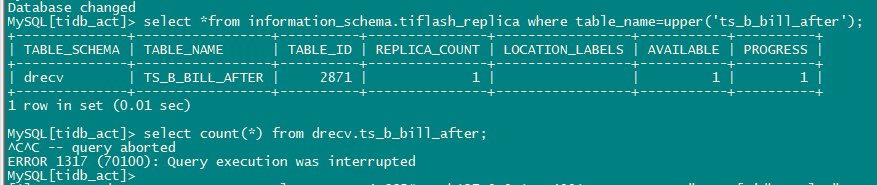

-------- Update 1 ----------------

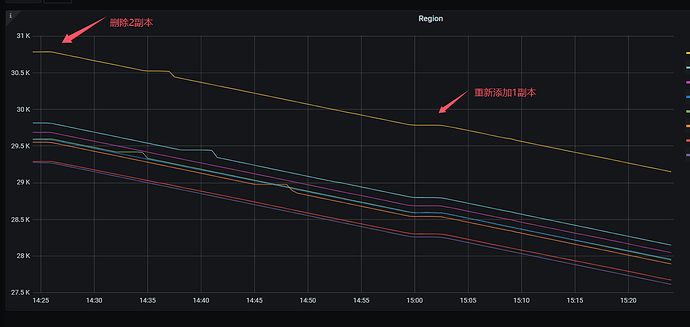

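So far, not every table hangs. Queries hang on several tables that are synchronized through DM, while tables without DM synchronization are fine. These tables receive a large volume of synchronized data, reaching about 300,000 TPS at peak. I tried dropping both TiFlash replicas of one of these tables and then re-adding a single replica. The TiFlash Region monitoring shows that after the replica was added, the number of Region replicas on TiFlash kept decreasing, presumably because the 2-hour GC combined with heavy data changes had produced a large number of Regions.

After the TiFlash replica was fully added, the query still hung.

After stopping the DM synchronization task for this table, the query succeeded. After restarting the DM task, however, the query waited for a while and then failed with an epoch error instead of hanging indefinitely as before. The other DM-synchronized tables, which were not handled this way, still cannot be queried.

These tables get a full backup every day, one backup table per day. Recent queries have all gone to the backup tables, and these source tables themselves have rarely been queried.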