【TiDB environment】Production

【TiDB version】

【Operating system】Rocky Linux release 9.5 (Blue Onyx)

【Deployment】Virtual machines

【Cluster data size】1 TB

【Number of cluster nodes】10

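【Steps to reproduce】TiFlash on the failed node could not be started. The system was brought back into service by scaling out and then scaling in.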

【Problem encountered: symptoms and impact】

TiFlash node 2 failed and cannot be started. The application reports the error "TiFlash server timeout".

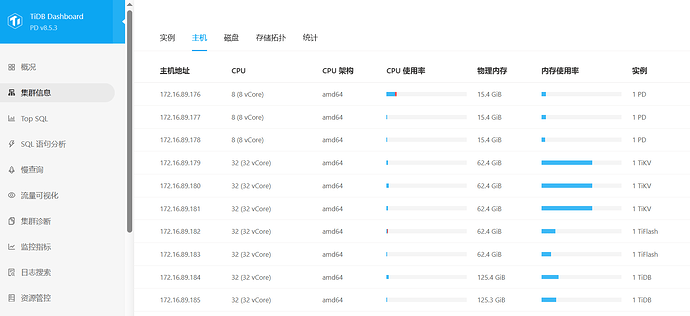

【Resource configuration】Go to TiDB Dashboard > Cluster Info > Hosts and take a screenshot of this page.

【Copy and paste the ERROR log】

tiflash_tikv.log

```
[2025/11/28 05:34:26.397 +08:00] [FATAL] [lib.rs:479] ["rocksdb background error. db: kv, reason: flush_no_wal, error: Corruption: Corrupted Key: Internal Key too small. Size=0. "] [backtrace=" 0: tikv_util::set_panic_hook::{{closure}}\n at /workspace/source/tiflash/contrib/tiflash-proxy/components/tikv_util/src/lib.rs:478:18\n 1: <alloc::boxed::Box<F,A> as core::ops::function::Fn>::call\n at /rustc/89e2160c4ca5808657ed55392620ed1dbbce78d1/library/alloc/src/boxed.rs:2029:9\n std::panicking::rust_panic_with_hook\n at /rustc/89e2160c4ca5808657ed55392620ed1dbbce78d1/library/std/src/panicking.rs:783:13\n 2: std::panicking::begin_panic_handler::{{closure}}\n at /rustc/89e2160c4ca5808657ed55392620ed1dbbce78d1/library/std/src/panicking.rs:657:13\n 3: std::sys_common::backtrace::__rust_end_short_backtrace\n at /rustc/89e2160c4ca5808657ed55392620ed1dbbce78d1/library/std/src/sys_common/backtrace.rs:171:18\n 4: rust_begin_unwind\n at /rustc/89e2160c4ca5808657ed55392620ed1dbbce78d1/library/std/src/panicking.rs:645:5\n 5: core::panicking::panic_fmt\n at /rustc/89e2160c4ca5808657ed55392620ed1dbbce78d1/library/core/src/panicking.rs:72:14\n 6: <engine_rocks::event_listener::RocksEventListener as rocksdb::event_listener::EventListener>::on_background_error\n at /workspace/source/tiflash/contrib/tiflash-proxy/components/engine_rocks/src/event_listener.rs:155:13\n 7: rocksdb::event_listener::on_background_error\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/src/event_listener.rs:366:5\n 8: _ZN24crocksdb_eventlistener_t17OnBackgroundErrorEN7rocksdb21BackgroundErrorReasonEPNS0_6StatusE\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/librocksdb_sys/crocksdb/c.cc:2502:5\n 9: _ZN7rocksdb12EventHelpers23NotifyOnBackgroundErrorERKNSt3__16vectorINS1_10shared_ptrINS_13EventListenerEEENS1_9allocatorIS5_EEEENS_21BackgroundErrorReasonEPNS_6StatusEPNS_17InstrumentedMutexEPb\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/librocksdb_sys/rocksdb/db/event_helpers.cc:62:15\n 10: _ZN7rocksdb12ErrorHandler17HandleKnownErrorsERKNS_6StatusENS_21BackgroundErrorReasonE\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/librocksdb_sys/rocksdb/db/error_handler.cc:339:5\n 11: _ZN7rocksdb12ErrorHandler10SetBGErrorERKNS_6StatusENS_21BackgroundErrorReasonE\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/librocksdb_sys/rocksdb/db/error_handler.cc:495:12\n 12: _ZN7rocksdb6DBImpl25FlushMemTableToOutputFileEPNS_16ColumnFamilyDataERKNS_16MutableCFOptionsEPbPNS_10JobContextENS_11FlushReasonEPNS_19SuperVersionContextERNSt3__16vectorImNSC_9allocatorImEEEEmPNS_15SnapshotCheckerEPNS_9LogBufferENS_3Env8PriorityE\n 13: _ZN7rocksdb6DBImpl27FlushMemTablesToOutputFilesERKNS_10autovectorINS0_10BGFlushArgELm8EEEPbPNS_10JobContextEPNS_9LogBufferENS_3Env8PriorityE\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/librocksdb_sys/rocksdb/db/db_impl/db_impl_compaction_flush.cc:450:14\n 14: _ZN7rocksdb6DBImpl15BackgroundFlushEPbPNS_10JobContextEPNS_9LogBufferEPNS_11FlushReasonES1_NS_3Env8PriorityE\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/librocksdb_sys/rocksdb/db/db_impl/db_impl_compaction_flush.cc:3259:14\n 15: _ZN7rocksdb6DBImpl19BackgroundCallFlushENS_3Env8PriorityE\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/librocksdb_sys/rocksdb/db/db_impl/db_impl_compaction_flush.cc:3304:9\n 16: _ZN7rocksdb14ThreadPoolImpl4Impl8BGThreadEm\n at /usr/local/bin/…/include/c++/v1/__functional/function.h:517:16\n 17: _ZN7rocksdb14ThreadPoolImpl4Impl15BGThreadWrapperEPv\n at /workspace/.cargo/git/checkouts/rust-rocksdb-a9a28e74c6ead8ef/2bb1e4e/librocksdb_sys/rocksdb/util/threadpool_imp.cc:353:7\n 18: ZNSt3__114__thread_proxyB8ue170006INS_5tupleIJNS_10unique_ptrINS_15__thread_structENS_14default_deleteIS3_EEEEPFvPvEPN7rocksdb16BGThreadMetadataEEEEEES7_S7\n at /usr/local/bin/…/include/c++/v1/__type_traits/invoke.h:340:25\n 19: start_thread\n 20: clone3\n"] [location=components/engine_rocks/src/event_listener.rs:155] [thread_name=] [thread_id=439]
[2025/11/28 05:34:42.411 +08:00] [FATAL] [common.rs:188] ["panic_mark_file /data/tidb-data/tiflash-9000/flash/panic_mark_file exists, there must be something wrong with the db. Do not remove the panic_mark_file and force the TiKV node to restart. Please contact TiKV maintainers to investigate the issue. If needed, use scale in and scale out to replace the TiKV node. https://docs.pingcap.com/tidb/stable/scale-tidb-using-tiup"] [thread_id=1]
```
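The second FATAL entry describes a guard in the TiFlash proxy: once a `panic_mark_file` exists in the data directory, the node is not supposed to be force-restarted. Below is a minimal Python sketch of a pre-restart check that could be run before touching a failed TiFlash node. It assumes the data directory shown in the log above and a local copy of `tiflash_tikv.log`. It reports whether the mark file is present and pulls the RocksDB `reason` / `error` pairs out of FATAL lines. The helper names (`panic_mark_present`, `fatal_rocksdb_errors`) are hypothetical and are not part of TiUP or TiFlash.

```python
import os
import re
from typing import Iterable, List, Tuple

# Assumption: data directory taken from the FATAL log above; adjust per deployment.
DEFAULT_FLASH_DIR = "/data/tidb-data/tiflash-9000/flash"

# Captures the "reason: ..., error: ..." part of a RocksDB background-error message,
# stopping at the closing quote of the log message field.
_BG_ERROR = re.compile(r'reason: ([^,]+), error: ([^"]*)"')


def panic_mark_present(flash_dir: str = DEFAULT_FLASH_DIR) -> bool:
    """Hypothetical helper: True if panic_mark_file exists in the data directory.

    Per the log message, the node should not be force-restarted while this file
    exists; replace the node with scale-in / scale-out instead.
    """
    return os.path.exists(os.path.join(flash_dir, "panic_mark_file"))


def fatal_rocksdb_errors(lines: Iterable[str]) -> List[Tuple[str, str]]:
    """Hypothetical helper: collect (reason, error) pairs from FATAL RocksDB lines."""
    found = []
    for line in lines:
        # Only look at FATAL entries raised for RocksDB background errors.
        if "[FATAL]" not in line or "rocksdb background error" not in line:
            continue
        match = _BG_ERROR.search(line)
        if match:
            # Trim the trailing ". " that precedes the closing quote in the log.
            error = match.group(2).strip().rstrip(".")
            found.append((match.group(1), error))
    return found
```

As a usage idea, a wrapper could call `panic_mark_present()` and refuse to restart the node if it returns `True`, and pass an open `tiflash_tikv.log` file object to `fatal_rocksdb_errors` to summarize corruption reasons (here `flush_no_wal` with a `Corruption` error) for the bug report.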

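【Other attachments: screenshots / logs / monitoring】

The TiFlash node failure has been resolved by replacing the node through scale-in and scale-out.

The root cause of the failure, and how to prevent it from happening again, still need to be determined.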