【TiDB Environment】Test environment

【TiDB Version】8.5.1

【Cluster Nodes】2 TiDB, 3 TiKV, and 3 PD nodes on six servers in total

【Problem: Symptoms and Impact】

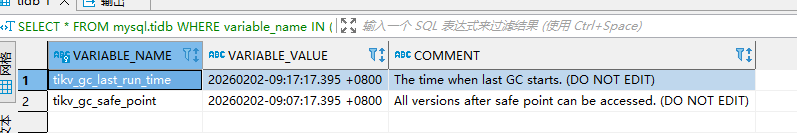

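After a large table was cleaned up, the disk space it occupied was not released. The query `SELECT * FROM mysql.tidb WHERE variable_name IN ('tikv_gc_safe_point', 'tikv_gc_last_run_time');` returns normal-looking results, as shown in the screenshot.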

However, two things look abnormal:

1. `tiup ctl:v8.5.1 pd -u http://192.168.181.57:2379 service-gc-safepoint show` reports `"gc_safe_point": 450204182675718148`, which is stuck at 2024-06-03 13:31:04.626 +0800 CST. This is not normal.
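A PD TSO such as 450204182675718148 keeps the physical time (Unix milliseconds) in its upper bits and an 18-bit logical counter in its lower bits, which is how this value maps to 2024-06-03 13:31:04.626 +0800. A minimal Python sketch of that conversion, assuming the standard TSO layout and a UTC+8 display zone (`decode_tso` is a hypothetical helper, not part of tiup or pd-ctl):

```python
from datetime import datetime, timedelta, timezone

LOGICAL_BITS = 18                     # low 18 bits: logical counter
UTC8 = timezone(timedelta(hours=8))   # display zone used in this post (+0800)
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def decode_tso(tso: int) -> tuple[datetime, int]:
    """Split a TSO into (physical wall-clock time in UTC+8, logical counter)."""
    physical_ms = tso >> LOGICAL_BITS
    logical = tso & ((1 << LOGICAL_BITS) - 1)
    return (EPOCH + timedelta(milliseconds=physical_ms)).astimezone(UTC8), logical

ts, logical = decode_tso(450204182675718148)
print(ts.isoformat(timespec="milliseconds"), logical)  # 2024-06-03T13:31:04.626+08:00 4
```

pd-ctl's `tso` subcommand performs the same decoding without any code.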

2. The TiDB log reports errors saying the regions returned by PD have gaps. The log is as follows:

```
[2026/02/03 15:08:17.464 +08:00] [INFO] [gc_worker.go:415] ["starts the whole job"] [category="gc worker"] [uuid=67021f41a540018] [safePoint=464021591790714880] [concurrency=3]
[2026/02/03 15:08:17.464 +08:00] [INFO] [gc_worker.go:1201] ["start resolve locks"] [category="gc worker"] [uuid=67021f41a540018] [safePoint=464021591790714880] [concurrency=3]
[2026/02/03 15:08:17.464 +08:00] [INFO] [range_task.go:167] ["range task started"] [name=resolve-locks-runner] [startKey=] [endKey=] [concurrency=3]
[2026/02/03 15:08:28.414 +08:00] [INFO] [domain.go:345] ["diff load InfoSchema success"] [isV2=false] [currentSchemaVersion=269919] [neededSchemaVersion=269920] ["elapsed time"=2.184694ms] [gotSchemaVersion=269920] [phyTblIDs="[454052,454054]"] [actionTypes="[11,11]"] [diffTypes="[\"truncate table\"]"]
[2026/02/03 15:08:28.421 +08:00] [INFO] [domain.go:1079] ["mdl gets lock, update self version to owner"] [jobID=454055] [version=269920]
```
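The safePoint in the GC job log can be compared with the gc_safe_point PD reports by converting both TSOs to wall-clock time and subtracting. A minimal Python sketch, assuming the standard TSO layout (physical milliseconds shifted left by 18 bits); the helper and variable names are illustrative only:

```python
from datetime import datetime, timedelta, timezone

LOGICAL_BITS = 18  # the low 18 bits of a TSO hold the logical counter
UTC8 = timezone(timedelta(hours=8))
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def tso_to_datetime(tso: int) -> datetime:
    """Convert the physical part of a TSO (Unix ms) to a UTC+8 datetime."""
    return (EPOCH + timedelta(milliseconds=tso >> LOGICAL_BITS)).astimezone(UTC8)

# safePoint the GC worker tried to apply ("starts the whole job" line)
worker_safe_point = 464021591790714880
# gc_safe_point still reported by `service-gc-safepoint show`
pd_gc_safe_point = 450204182675718148

worker_time = tso_to_datetime(worker_safe_point)
lag = worker_time - tso_to_datetime(pd_gc_safe_point)
print(worker_time.isoformat(timespec="milliseconds"))  # 2026-02-03T14:58:17.395+08:00
print(lag)                                             # 610 days, 1:27:12.769000
```

By this arithmetic, the worker's safe point is about 10 minutes before the job started at 15:08:17, while the value PD reports is roughly 610 days older.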

```
[2026/02/03 15:09:06.978 +08:00] [WARN] [backoff.go:179] ["pdRPC backoffer.maxSleep 40000ms is exceeded, errors:\nPD returned regions have gaps, limit: 128 at 2026-02-03T15:08:59.959162437+08:00\nPD returned regions have gaps, limit: 128 at 2026-02-03T15:09:02.843258856+08:00\nPD returned regions have gaps, limit: 128 at 2026-02-03T15:09:05.214818078+08:00\ntotal-backoff-times: 19, backoff-detail: pdRPC:19, maxBackoffTimeExceeded: true, maxExcludedTimeExceeded: false\nlongest sleep type: pdRPC, time: 41626ms"]
[2026/02/03 15:09:06.978 +08:00] [INFO] [range_task.go:223] ["range task try to get range end key failure"] [name=resolve-locks-runner] [startKey=] [endKey=] [loadRegionKey=74800000000003150d] ["cost time"=49.514091412s] [error=]
[2026/02/03 15:09:06.978 +08:00] [ERROR] [gc_worker.go:1219] ["resolve locks failed"] [category="gc worker"] [uuid=67021f41a540018] [safePoint=464021591790714880] [error=]
[2026/02/03 15:09:06.978 +08:00] [ERROR] [gc_worker.go:750] ["resolve locks returns an error"] [category="gc worker"] [uuid=67021f41a540018] [error=]
[2026/02/03 15:09:06.979 +08:00] [ERROR] [gc_worker.go:220] [runGCJob] [category="gc worker"] [error=]
```
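The loadRegionKey in the failing range task is a hex-encoded TiDB key. Table keys start with the byte `t` (0x74) followed by the table ID as a big-endian int64 with its sign bit flipped; this key is exactly 9 bytes with no `_r`/`_i` suffix, so it marks the start of one table's (or partition's) key range. A minimal Python sketch to recover that ID, assuming this key encoding (`table_id_from_key` is a hypothetical helper):

```python
SIGN_MASK = 1 << 63  # memcomparable int64 encoding flips the sign bit

def table_id_from_key(hex_key: str) -> int:
    """Return the (physical) table ID encoded in a TiDB table key prefix."""
    raw = bytes.fromhex(hex_key)
    if len(raw) < 9 or not raw.startswith(b"t"):
        raise ValueError("not a TiDB table key")
    # Bytes 1..8 are the big-endian table ID with the sign bit flipped.
    value = int.from_bytes(raw[1:9], "big") ^ SIGN_MASK
    # Reinterpret as a signed int64.
    return value - (1 << 64) if value >= SIGN_MASK else value

print(table_id_from_key("74800000000003150d"))  # 201997
```

The resulting ID can be matched against the `TIDB_TABLE_ID` column of `information_schema.tables` (or `TIDB_PARTITION_ID` in `information_schema.partitions`) to see which table's range the resolve-locks scan was loading when the error occurred.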

Could anyone help me figure out where the problem is and how to fix it?