sgjr

1

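BR backup failed with an error saying the file size exceeds 300MB. Is there a parameter that controls this? The error is as follows: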

2026-02-25 15:01:10.696973272 +0800 HKT m=+129640.408508860 write error: write length 1184260248 exceeds maximum file size 314572800
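Both numbers in this message are byte counts. A small Go check confirms that the limit 314572800 is exactly 300 MiB, so the "300MB" in the question is a 300 MiB cap. It also shows that the rejected write is roughly 1129 MiB, a little under four times that cap. The helper toMiB is a hypothetical name used only for this illustration.

```go
package main

import "fmt"

// bytesPerMiB is 2^20 bytes (one mebibyte).
const bytesPerMiB = 1 << 20

// toMiB converts a byte count to mebibytes.
// Hypothetical helper for illustration only; not part of BR.
func toMiB(n int64) float64 {
	return float64(n) / bytesPerMiB
}

func main() {
	const limit int64 = 314572800     // "maximum file size" from the error
	const writeLen int64 = 1184260248 // "write length" from the error

	fmt.Printf("limit: %.0f MiB\n", toMiB(limit))
	fmt.Printf("write: %.2f MiB\n", toMiB(writeLen))
	fmt.Printf("write is %.2fx the limit\n", float64(writeLen)/float64(limit))
}
```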

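[TiDB environment] Production

[TiDB version] 7.5.6

[Deployment method] Cloud deployment (which cloud) / machine deployment

[OS / CPU architecture / chip details]

[Machine details] CPU / memory / disk size

[Cluster data volume]

[Number of cluster nodes]

[Reproduction steps] What operations led to the problem

[Problem: symptoms and impact]

[Resource configuration] Go to TiDB Dashboard > Cluster Info > Hosts and take a screenshot of this page

[Copy and paste the ERROR log]

2026-02-25 15:01:10.696973272 +0800 HKT m=+129640.408508860 write error: write length 1184260248 exceeds maximum file size 314572800

[Other attachments: screenshots / logs / monitoring]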

Kongdom

(Kongdom)

2

I don't recall there being a size limit. Which command did you use for the backup? Do you have more of the backup log?


sgjr

3

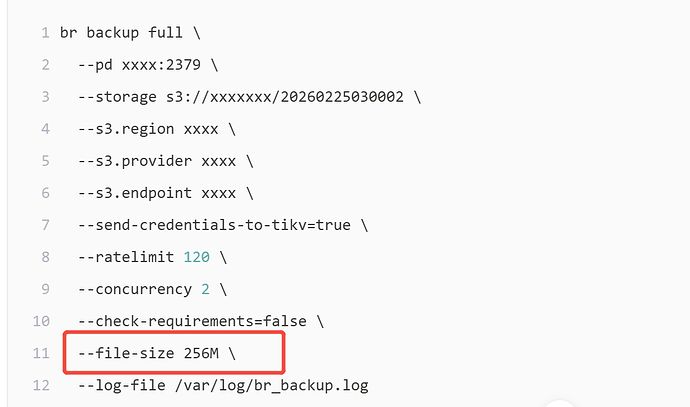

br backup full --pd xxxx:2379 --storage s3://xxxxxxx/20260225030002 --s3.region xxxx --s3.provider xxxx --s3.endpoint xxxx --send-credentials-to-tikv=true --ratelimit 120 --concurrency 2 --check-requirements=false --log-file /var/log/br_backup.log

2026-02-25 15:01:10.696973272 +0800 HKT m=+129640.408508860 write error: write length 1184260248 exceeds maximum file size 314572800

[2026/02/25 15:01:23.581 +08:00] [ERROR] [backup.go:55] ["failed to backup"] [error="rpc error: code = Canceled desc = context canceled"] [errorVerbose="rpc error: code = Canceled desc = context canceled\ngithub.com/tikv/pd/client.(*client).respForErr\n\t/root/go/pkg/mod/github.com/tikv/pd/client@v0.0.0-20250219063534-ff54072887c0/client.go:1602\ngithub.com/tikv/pd/client.(*client).GetAllStores\n\t/root/go/pkg/mod/github.com/tikv/pd/client@v0.0.0-20250219063534-ff54072887c0/client.go:1193\ngithub.com/pingcap/tidb/br/pkg/conn/util.GetAllTiKVStores\n\t/workspace/source/tidb/br/pkg/conn/util/util.go:48\ngithub.com/pingcap/tidb/br/pkg/conn.GetAllTiKVStoresWithRetry.func1\n\t/workspace/source/tidb/br/pkg/conn/conn.go:84\ngithub.com/pingcap/tidb/br/pkg/utils.WithRetry.func1\n\t/workspace/source/tidb/br/pkg/utils/retry.go:217\ngithub.com/pingcap/tidb/br/pkg/utils.WithRetryV2[...]\n\t/workspace/source/tidb/br/pkg/utils/retry.go:235\ngithub.com/pingcap/tidb/br/pkg/utils.WithRetry\n\t/workspace/source/tidb/br/pkg/utils/retry.go:216\ngithub.com/pingcap/tidb/br/pkg/conn.GetAllTiKVStoresWithRetry\n\t/workspace/source/tidb/br/pkg/conn/conn.go:81\ngithub.com/pingcap/tidb/br/pkg/backup.(*Client).BackupRange\n\t/workspace/source/tidb/br/pkg/backup/client.go:956\ngithub.com/pingcap/tidb/br/pkg/backup.(*Client).BackupRanges.func2\n\t/workspace/source/tidb/br/pkg/backup/client.go:915\ngithub.com/pingcap/tidb/br/pkg/utils.(*WorkerPool).ApplyOnErrorGroup.func1\n\t/workspace/source/tidb/br/pkg/utils/worker.go:76\ngolang.org/x/sync/errgroup.(*Group).Go.func1\n\t/root/go/pkg/mod/golang.org/x/sync@v0.5.0/errgroup/errgroup.go:75\nruntime.goexit\n\t/usr/local/go/src/runtime/asm_amd64.s:1650"] 
[stack="main.runBackupCommand\n\t/workspace/source/tidb/br/cmd/br/backup.go:55\nmain.newFullBackupCommand.func1\n\t/workspace/source/tidb/br/cmd/br/backup.go:144\ngithub.com/spf13/cobra.(*Command).execute\n\t/root/go/pkg/mod/github.com/spf13/cobra@v1.7.0/command.go:940\ngithub.com/spf13/cobra.(*Command).ExecuteC\n\t/root/go/pkg/mod/github.com/spf13/cobra@v1.7.0/command.go:1068\ngithub.com/spf13/cobra.(*Command).Execute\n\t/root/go/pkg/mod/github.com/spf13/cobra@v1.7.0/command.go:992\nmain.main\n\t/workspace/source/tidb/br/cmd/br/main.go:36\nruntime.main\n\t/usr/local/go/src/runtime/proc.go:267"]

[2026/02/25 15:01:23.581 +08:00] [ERROR] [main.go:38] ["br failed"] [error="rpc error: code = Canceled desc = context canceled"] [errorVerbose="rpc error: code = Canceled desc = context canceled\ngithub.com/tikv/pd/client.(*client).respForErr\n\t/root/go/pkg/mod/github.com/tikv/pd/client@v0.0.0-20250219063534-ff54072887c0/client.go:1602\ngithub.com/tikv/pd/client.(*client).GetAllStores\n\t/root/go/pkg/mod/github.com/tikv/pd/client@v0.0.0-20250219063534-ff54072887c0/client.go:1193\ngithub.com/pingcap/tidb/br/pkg/conn/util.GetAllTiKVStores\n\t/workspace/source/tidb/br/pkg/conn/util/util.go:48\ngithub.com/pingcap/tidb/br/pkg/conn.GetAllTiKVStoresWithRetry.func1\n\t/workspace/source/tidb/br/pkg/conn/conn.go:84\ngithub.com/pingcap/tidb/br/pkg/utils.WithRetry.func1\n\t/workspace/source/tidb/br/pkg/utils/retry.go:217\ngithub.com/pingcap/tidb/br/pkg/utils.WithRetryV2[...]\n\t/workspace/source/tidb/br/pkg/utils/retry.go:235\ngithub.com/pingcap/tidb/br/pkg/utils.WithRetry\n\t/workspace/source/tidb/br/pkg/utils/retry.go:216\ngithub.com/pingcap/tidb/br/pkg/conn.GetAllTiKVStoresWithRetry\n\t/workspace/source/tidb/br/pkg/conn/conn.go:81\ngithub.com/pingcap/tidb/br/pkg/backup.(*Client).BackupRange\n\t/workspace/source/tidb/br/pkg/backup/client.go:956\ngithub.com/pingcap/tidb/br/pkg/backup.(*Client).BackupRanges.func2\n\t/workspace/source/tidb/br/pkg/backup/client.go:915\ngithub.com/pingcap/tidb/br/pkg/utils.(*WorkerPool).ApplyOnErrorGroup.func1\n\t/workspace/source/tidb/br/pkg/utils/worker.go:76\ngolang.org/x/sync/errgroup.(*Group).Go.func1\n\t/root/go/pkg/mod/golang.org/x/sync@v0.5.0/errgroup/errgroup.go:75\nruntime.goexit\n\t/usr/local/go/src/runtime/asm_amd64.s:1650"] [stack="main.main\n\t/workspace/source/tidb/br/cmd/br/main.go:38\nruntime.main\n\t/usr/local/go/src/runtime/proc.go:267"]

Kongdom

(Kongdom)

6

Is it self-hosted or a cloud service? I searched on Baidu, and it may be a limit on the S3 side.

随缘天空

(Ti D Ber Ivw R7o Pj)

7

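Try adding the file-size parameter. BR splits large files during backup, and I think the default size of a single file is 256M. Also check whether your S3 service has an upload limit.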
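The splitting idea in this reply can be illustrated with plain arithmetic. Cutting a payload into pieces of at most a fixed size yields ceil(size / limit) pieces, and the last piece holds the remainder. This is a generic sketch, not BR's implementation. The 256 MiB limit is the unverified "default seems to be 256M" figure from this reply, and splitSizes is a hypothetical helper.

```go
package main

import "fmt"

// splitSizes returns the sizes of the pieces obtained by cutting total bytes
// into chunks of at most limit bytes; the last chunk holds the remainder.
// Hypothetical helper for illustration only; not BR code.
func splitSizes(total, limit int64) []int64 {
	if limit <= 0 {
		panic("limit must be positive")
	}
	var sizes []int64
	for total > 0 {
		n := limit
		if total < limit {
			n = total // final, partial chunk
		}
		sizes = append(sizes, n)
		total -= n
	}
	return sizes
}

func main() {
	const mib int64 = 1 << 20
	const writeLen int64 = 1184260248 // write length from the error message

	// Assumed 256 MiB per-piece limit (unverified figure from the reply).
	parts := splitSizes(writeLen, 256*mib)
	fmt.Println(len(parts), "pieces; last piece:", parts[len(parts)-1], "bytes")
	for i, p := range parts {
		fmt.Printf("piece %d: %d bytes\n", i, p)
	}
}
```

Applied to the 1184260248-byte write from the error, a 256 MiB limit gives five pieces, the last of 110518424 bytes.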

As a first step, tune Drainer's concurrency / batch parameters to reduce the cross-Region prewrite pressure of a single transaction.

Kongdom

(Kongdom)

13

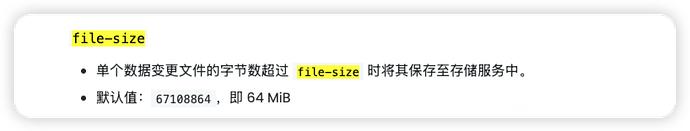

Is this the one you mean? AI also says to set --file-size, but I don't see this parameter in the official documentation.

独善其身

(Ti D Ber Bi Rqfz5 K)

14

Version 8.5 seems to have a file-size parameter that limits the size of backup chunks.

随缘天空

(Ti D Ber Ivw R7o Pj)

16

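You could try running the command with the corresponding parameter (-F 500MiB or --filesize 500MiB). I haven't verified whether the BR command supports it, but Dumpling's backup command does let you set a file size. Here is a basic Dumpling command: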

tiup dumpling -u root -p {password} -P 4000 -h 127.0.0.1 --filetype sql -o /xx/xxx -t 4 -r 200000 -F 500MiB -B {database} -L {log file}
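The -F value above uses a binary unit. This sketch shows how such a string maps to bytes, assuming KiB/MiB/GiB are powers of 1024. By that rule, 500MiB works out to 524288000 bytes. parseBinarySize is a hypothetical helper, not Dumpling's own parser.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// parseBinarySize converts strings such as "500MiB" into a byte count.
// Hypothetical helper for illustration; it only understands B, KiB, MiB, GiB
// and does not guard against overflow.
func parseBinarySize(s string) (int64, error) {
	// Longer suffixes come first so that "MiB" is not matched as plain "B".
	units := []struct {
		suffix string
		mult   int64
	}{
		{"GiB", 1 << 30},
		{"MiB", 1 << 20},
		{"KiB", 1 << 10},
		{"B", 1},
	}
	s = strings.TrimSpace(s)
	for _, u := range units {
		if strings.HasSuffix(s, u.suffix) {
			num := strings.TrimSpace(strings.TrimSuffix(s, u.suffix))
			n, err := strconv.ParseInt(num, 10, 64)
			if err != nil {
				return 0, fmt.Errorf("invalid size %q: %w", s, err)
			}
			return n * u.mult, nil
		}
	}
	return 0, fmt.Errorf("unknown unit in %q", s)
}

func main() {
	n, err := parseBinarySize("500MiB")
	if err != nil {
		panic(err)
	}
	fmt.Println(n)
}
```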

随缘天空

(Ti D Ber Ivw R7o Pj)

17

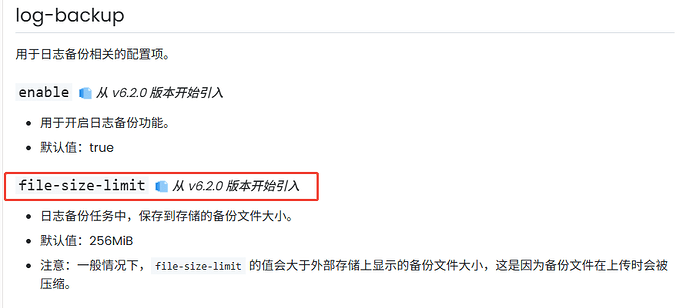

Not that one. That is a log backup parameter; I was referring to the parameter for BR snapshot backup.